OWASP Top 10 for Large Language Model Applications

21st January 2024

OWASP stands for Open Worldwide Application Security Project (formerly Open Web Application Security Project), and it is an online community dedicated to web application security. They are well known for their Top 10 Web Application Security Risks and, late last year, they added a Top 10 for Large Language Model (LLM) Applications.

Given that large language models made quite a splash last year, this was not before time. ChatGPT gained a lot of attention (OpenAI also has had DALL-E for generation of images for quite a while now), and there are many others, with Anthropic Claude and Perplexity also being mentioned more widely.

Figuring out what to do with any of these is not as easy as one might think. For someone more used to working with computer code, using natural language requests is quite a shift when you no longer have documentation that tells you what can and what cannot be done. It is little wonder that prompt engineering has emerged as a way to deal with this.

Others have been plugging LLM capability into chatbots and other applications, so security concerns have come to light. So far, I have not heard anything about a major security incident, but some are thinking already about how to deal with AI-suggested code that others already are using more and more.

Given all that, here is OWASP’s summary of their Top 10 for LLM Applications. This is a subject that is sure to draw more and more interest with the increasing presence of artificial intelligence in our everyday working and non-working lives.

LLM01: Prompt Injection

This manipulates an LLM through crafty inputs, causing unintended actions by the LLM. Direct injections overwrite system prompts, while indirect ones manipulate inputs from external sources.

LLM02: Insecure Output Handling

This vulnerability occurs when an LLM output is accepted without scrutiny, exposing backend systems. Misuse may lead to severe consequences such as Cross-Site Scripting (XSS), Cross-Site Request Forgery (CSRF), Server-Side Request Forgery (SSRF), privilege escalation, or remote code execution.

LLM03: Training Data Poisoning

This occurs when LLM training data is tampered with, introducing vulnerabilities or biases that compromise security, effectiveness, or ethical behaviour. Sources include Common Crawl, WebText, OpenWebText and books.

LLM04: Model Denial of Service

Attackers cause resource-heavy operations on LLMs, leading to service degradation or high costs. The vulnerability is magnified due to the resource-intensive nature of LLMs and the unpredictability of user inputs.

LLM05: Supply Chain Vulnerabilities

The LLM application lifecycle can be compromised by vulnerable components or services, leading to security attacks. Using third-party datasets, pre-trained models, and plugins can add vulnerabilities.

LLM06: Sensitive Information Disclosure

LLMs may inadvertently reveal confidential data in their responses, leading to unauthorized data access, privacy violations, and security breaches. It’s crucial to implement data sanitization and strict user policies to mitigate this.

LLM07: Insecure Plugin Design

LLM plugins can have insecure inputs and insufficient access control. This lack of application control makes them easier to exploit and can result in consequences such as remote code execution.

LLM08: Excessive Agency

LLM-based systems may undertake actions leading to unintended consequences. The issue arises from excessive functionality, permissions, or autonomy granted to the LLM-based systems.

LLM09: Overreliance

Systems or people overly depending on LLMs without oversight may face misinformation, miscommunication, legal issues, and security vulnerabilities due to incorrect or inappropriate content generated by LLMs.

LLM10: Model Theft

This involves unauthorized access, copying, or exfiltration of proprietary LLM models. The impact includes economic losses, compromised competitive advantage, and potential access to sensitive information.

Slackware & Derivatives

2nd November 2023

There’s a saying out there about Slackware being the only distribution that’ll teach you about Linux. For one thing, it certainly has been around since near enough the dawn of Linux and I remember a colleague at The University of Edinburgh using it on one of the institution’s research PC’s. There is a hardcore aspect to the distro so it’s not for beginners and, whatever you do, don’t go expecting any easy way of updating the software that you install on your system.

Here is a Slackware-based distro that comes with the following desktop environments: Xfce, MATE, LXDE, Fluxbox, KDE and Ratpoison. It is interesting to see that GNOME has been given a wide berth with MATE included in its stead. This was created as a fork of Zenwalk when its originator decided to slim things down from where they had gone.

This is a Greek distro that is based on both Slackware and its derivative Salix. KDE and Openbox are the desktop choices for anyone who might be interested.

Along with the longstanding x86 releases (32- and 64-bit, by the way), there’s even an ARM version now. Early in 2012, the distro’s website disappeared because there wasn’t money to address a hardware failure. Thankfully, it’s back online now thanks to the support of its community, even if RAM remains an issue on the main server; that’s why a mirror site exists. The way this bump was resolved shows that even more technical variants of Linux have their fans and there’s something to be said for knowing more about the innards of the operating system too.

Whether you are an admirer of Slackware or not, this modular lightweight distro is intended to run on some very old computers. While I have no idea where they might find PC’s from around twenty years ago (Intel 486, anyone?) for testing that Slax runs on them, that hasn’t stopped the project from allowing its wares to run on those. They even sell a USB stick drive with the software installed on there to fund the project if downloading the files is too much for you.

The acronym stands for Superb Mini Server and that provides the intent of this Italian distro. Slackware is its basis and the inclusion of Webmin is more than a strong hint that it is built for remote management too. Also, part of the package is the sorts of tooling that you’d need for a web server so it’s not just for file storage and there’s firewall management available too.

XFCE is the main desktop environment chosen for this distro and it too has been around for nearly twenty years. Things have slimmed down to a single 64-bit edition though legacy versions remain available from mirror sites.

Limiting Google Drive upload & synchronisation speeds using Trickle

9th October 2021

Having had a mishap that lost me some photos in the early days of my dalliance with digital photography, I have been far more careful since then and that now applies to other files as well. Doing regular backups is a must that you find reiterated by many different authors and the current computing climate makes doing that more vital than it ever was.

So, as well as having various local backups, I also have remote ones in the form of OneDrive, Dropbox and Google Drive. These more correctly are file synchronisation services but disciplined use can make them useful as additional storage facilities in the interests of maintaining added resilience. There also are dedicated backup services that I have seen reviewed in the likes of PC Pro magazine but I have yet to make use of those.

Insync

Part of my process for dealing with new digital photo files is to back them up to Google Drive and I did that with a Windows client in the early days but then moved to Insync running on Linux Mint. One drawback to the approach is that this hogs the upload bandwidth of an internet connection that has yet to move to fibre from copper cabling. Having fibre connections to a local cabinet helps but a 100 KiB/s upload speed is easily overwhelmed and digital photo file sizes keep increasing. It does not help that I insist on using more flexible raw formats like DNG, CR2 or CR3 either.

Making fewer images could help to cut the load but I still come away from an excursion with many files because I get so besotted with my surroundings. This means that upload sessions take numerous hours and can extend across calendar days. Ultimately, this makes my internet connection far less usable so I want to throttle upload speed much like what is possible in the Transmission BitTorrent client or in the Dropbox client. Unfortunately, this is not available in Insync so I have tried using the trickle command instead and an example is below:

trickle -d 2000 -u 50 insync

Here, the upload speed is limited to 50 KiB/s while the download speed is limited to 2000 KiB/s. In my case, the latter of these hardly matters while the former leaves me with acceptable internet usability. Insync does not work smoothly with this, however, so occasional restarts are needed to keep file uploads progressing and CPU load also is higher. As rough as the user experience feels, uploads can continue in parallel with other work.

gdrive

One other option that I am exploring is the use of the command-line tool gdrive and this appears to work well with trickle. After downloading and installing the tool, getting going is a matter of issuing the following command and following the instructions:

gdrive about

On web servers, I even have the tool backing up things to Google Drive on a scheduled basis. Because of a Google Drive limitation that I have encountered not only with gdrive but also with Insync and Google’s own Windows Google Drive client, synchronisation only can happen with two new folders, one local and the other remote. Handily, gdrive supports the usual bash style commands for working with remote directories so something like the following will create a directory on Google Drive:

gdrive mkdir -p [ID for parent folder] ttdc

Here, the ID for the parent folder may be omitted but it can be obtained by going to Google Drive online and getting a link location by right-clicking on a folder and choosing the appropriate context menu item. This gets you something like the following and the required identifier is found between the last slash and the first question mark in the address string (so as not to share any real links, I made the address more general below):

https://drive.google.com/drive/folders/[remote folder ID]?usp=sharing
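The rule for finding the identifier (everything between the last slash and the first question mark) can be expressed using Bash parameter expansion. This is only a minimal sketch: the function name folder_id_from_url is hypothetical, and it assumes that the share link has the same shape as the generalised address above.

```shell
# Extract a Google Drive folder ID from a share link.
# Hypothetical helper; assumes the link looks like
# https://drive.google.com/drive/folders/<ID>?usp=sharing
folder_id_from_url() {
    local url="$1"
    # Drop everything from the first question mark onwards.
    url="${url%%\?*}"
    # Keep only what follows the last remaining slash.
    printf '%s\n' "${url##*/}"
}
```

Calling the function with a copied link prints the identifier, which then can be passed to gdrive commands instead of being picked out by eye.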

Then, synchronisation uses a command like the following:

gdrive sync upload [local folder or file path] [remote folder ID]

There also is the option to do a one-way upload and this is the form of the command used:

gdrive upload [local folder or file path] -p [remote folder ID]

Because every file or folder object has its own ID on Google Drive, it is possible to create two objects on there that appear to have the same name, though that is sure to cause confusion even if you know what is happening. Each of the above commands can be throttled using trickle as well:

trickle -d 2000 -u 50 gdrive sync upload [local folder or file path] [remote folder ID]

trickle -d 2000 -u 50 gdrive upload [local folder or file path] -p [remote folder ID]

Handily, this works without the added drama seen with Insync and lends itself to scripting as well so it could be something that I will incorporate into my current workflow. One thing that needs to be watched is file upload failures but there may be ways to catch those and retry them so that would be another thing that needs doing. This is built into Insync and it would be a learning opportunity if I were to stick with gdrive instead.
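As a rough idea of how failed uploads might be caught and retried, here is a minimal Bash sketch. The retry function is hypothetical, and it assumes that gdrive exits with a non-zero status when an upload fails, which should be checked before relying on it.

```shell
# retry MAX_ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Hypothetical helper: reruns COMMAND until it succeeds or the
# attempt limit is reached. Assumes that failure is signalled by
# a non-zero exit status.
retry() {
    local max="$1" delay="$2" attempt=1
    shift 2
    until "$@"; do
        if [ "$attempt" -ge "$max" ]; then
            echo "Giving up after $attempt attempts: $*" >&2
            return 1
        fi
        echo "Attempt $attempt failed; retrying in ${delay}s" >&2
        sleep "$delay"
        attempt=$((attempt + 1))
    done
}

# Example usage (placeholders as in the commands above):
# retry 5 60 trickle -d 2000 -u 50 gdrive upload [local folder or file path] -p [remote folder ID]
```

A fixed delay keeps the sketch short; a lengthening delay between attempts may suit a flaky connection better.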

A little bit of abstraction

21st August 2021

Data science has remained in my awareness since 2017 though my work is more on its fringes in clinical research. In fact, I have been involved more in the standardisation and automation of more traditional data reporting than in the needs of data modelling such as data engineering or other similar disciplines. Much of this effort has meant the use of SAS, with which I have programmed since 2000 and for which I have a licence (an expensive commodity, it has to be said), but other technologies are being explored with R, Python and Julia being among them.

The change in technological scope does bring an element of excitement and new interest but there is also some sadness when tried and trusted technologies meet with newer competition and valued skills are no longer as career securing as they once were. Still, there is plenty of online training out there and I already have collected some of my thoughts on this. The learning continues and the need for repositioning is also clear.

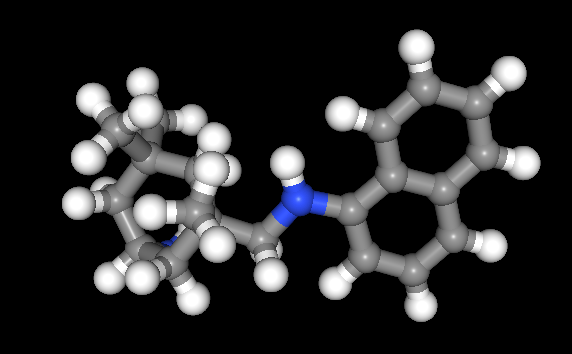

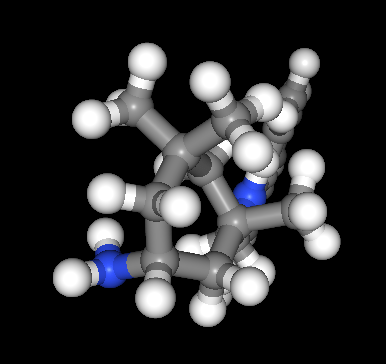

The journey also has brought some curios to my notice. One of these is This Person Does Not Exist, a website building photos of non-existent faces using machine learning. Recently, I learned of others like it such as This Artwork Does Not Exist, This Cat Does Not Exist, This Horse Does Not Exist, and This Chemical Does Not Exist. The last of these probably should be entitled “This Molecule Does Not Exist (Yet)” since it is a fictitious molecular structure that has been created and what you get is an actual moving image that spins it around in three-dimensional space. The one with dynamically generated abstract art is the main inspiration for this piece and is of more interest to me while the other two are more explanatory though the horse website is not so successful in its execution and one can ask why we need more cat pictures.

To some, the idea of creating fake pictures may feel a little foreboding and that especially applies to photos of people and the livelihoods of any content creators. Nevertheless, these sources of imagery have their legitimate uses such as decorating websites or brochures and that is where my interest is piqued. After all, there are some subjects where pictures can be scarce so any form of decoration that enlivens an article has to have some use. Technology websites like this one can feature images too with screenshots and device photos being commonplace but they can all look like each other, hence the need for a little more variety and having pictures often increases the choice of website themes as well since so many need images to make them work or stand out. As ever, being sparing with any new innovations remains in order so that is how I approach this matter as well.

Some books and other forms of documentation on R

11th September 2021

The thrust of an exhortation from a computing handbook publisher comes to mind here: don’t just look things up on Google, read a book so you really understand what you are doing. Something like those words was used to sell an eBook on GitHub but the same sentiment applies to R or any other computing language. Using a search engine will get you going or add to existing knowledge but only a book or a training course will help to embed real competence.

In the case of R, there is a myriad of blogs out there that can be consulted as well as function and package documentation on RDocumentation or rdrr.io. For the former, R-bloggers or R Weekly can make good places to start while ones like Stats and R, Statistics Globe, STHDA, PSI’s VIS-SIG and anything from Posit (including their main blog as well as their AI one) can be worth consulting. Additionally, there is also RStudio Education and the NHS-R Community, which also have a GitHub repository together with a YouTube channel. Many packages have dedicated websites as well, so there is no lack of documentation with all of these. Here is a selection:

To come to the real subject of this post, R is unusual in that books that you can buy also have companion websites that contain the same content with the same structure. Whatever funds this approach (and some appear to be supported by RStudio itself by the looks of things), there certainly are a lot of books available freely online in HTML as you will see from the list below while a few do not have a print counterpart as far as I know:

R Programming for Data Science

R Markdown: The Definitive Guide

bookdown: Authoring Books and Technical Documents with R Markdown

blogdown: Creating Websites with R Markdown

pagedown: Create Paged HTML Documents for Printing from R Markdown

Dynamic Documents with R and knitr

Engineering Production-Grade Shiny Apps

Outstanding User Interfaces with Shiny

Happy Git and GitHub for the useR

Many of the above have counterparts published by O’Reilly or Chapman & Hall, to name the two publishers that I have found so far. Aside from sharing these with you, there is also the personal motivation of having the collection of links somewhere so I can close tabs in my Firefox session. There are other web articles open in other tabs that I need to retain and share but these will need to do for now and I hope that you find them as useful as I do.

Self-learning new computing languages

10th April 2021

Over the years, I have taught myself a number of computing languages with some coming in useful for professional work while others came in handy for website development and maintenance. The collection has grown to include HTML, CSS, XML, Perl, PHP and UNIX Shell Scripting. The ongoing pandemic allowed me to add two more to the repertoire: R and Python.

My interest in these arose from my work as an information professional concerned with standardisation and automation of statistical results delivery. To date, the main focus has been on clinical study data but ongoing changes in the life sciences sector could mean that I may need to look further afield so having extra knowledge never hurts. Though I have been a SAS programmer for more than twenty years, its predominance in the clinical research field is not what it was, so I am having to rethink things.

As it happens, I would like to continue working with SAS since it does so much and thoughts of leaving it after me bring sadness. It also helps to know what the alternatives might be and to reject some management hopes about any newcomers, especially with regard to the amount of code being produced and the quality of graphs being created. Use cases need to be assessed dispassionately even when emotions loom behind the scenes.

Both R and Python bring large scripting ecosystems with active communities so the attraction of their adoption makes a deal of sense. SAS is comparable in the scale of its own ecosystem though there are considerable differences and the platform is catching up when it comes to Data Science. The aforementioned open source languages may have had a head start but it seems that others are not standing still either. It is a time to have wider awareness and online conference attendance helps with that.

The breadth of what is available for any programming language more than stymies any attempt to create a truly all-encompassing starting point and I have abandoned thoughts of doing anything like that for R. Similarly, I will not even try such a thing for Python. Consequently, this means that my sharing of anything learned will be in the form of discrete postings from time to time, especially given how easy it is to collect numerous website links for sharing.

The learning has been facilitated by ongoing pandemic restrictions though things are opening up a little now. The pandemic also has given us public data that can be used for practice since much can be gained from having one’s own project instead of completing exercises from a book. Having an interesting data set with which to work is a must and COVID-19 data contain a certain self-interest as well though one always is mindful of the suffering and loss of life that has been happening since the pandemic first took hold.

More Linux Distributions

21st September 2012

If a certain Richard Stallman had his way, Linux would be called GNU/Linux because he wants GNU to have some of the credit, but we’re lazy creatures and we all call it Linux instead. What still amazes me is the number of Linux distributions that are out there. This list captures those that do not fit into other lists that you can find in the sidebar, so do look at the others as well.

Many fit into the desktop and server computing paradigms while a minority are very distinctive. It is easier to write about the latter than the former, though personal experiences do add to any narrative. It is tempting to think that everything has become static after more than thirty years, yet that may be foolish given the ongoing flux in the world of technology. Only change is ever a constant presence.

More in the Way of Privacy

The controversy about security agencies eavesdropping on internet communications has upset some and here are some distros offering anonymity and privacy. Of course, none of these should be used for unlawful purposes since there are those in less liberal countries who need invisibility to speak their minds.

It is harder and harder to create a Linux distro that is very different from the rest, but this one uses application virtualisation for added security. You can organise your software into different domains so that you work more securely when moving data between applications from different domains.

There is more than a hint of privacy-mindedness in this distro when you look long enough at what it offers. Cinnamon, MATE and Xfce desktop environments are part of the offer and there is added software for extra privacy and security.

This is an option for those who are worried about being tracked online. All internet connections are sent via the Tor network and it is run exclusively as a live distro from CD, DVD or USB stick drive too, so no trace is left on any PC. The basis is Debian and the distro’s name is an acronym: The Amnesiac Incognito Live System. For us living in a democratic country, the effort may seem excessive but that changes in other places where folk are not so fortunate. The use of Tor may not be perfect but it should help in combination with the use of different sessions for different tasks and encrypting any files. There even is an option to make the desktop appear like that of Windows XP for extra discreetness of use.

Most Linux distros that have enhanced security and anonymity as a feature are not installable on a PC, but that exactly is what’s unique about Whonix. It’s based on Debian but all internet connections go via the Tor network. The component that handles those connections is called Whonix-Gateway, with Whonix-Workstation being what you use to work on your system. It may sound like being overly careful but it has me intrigued.

Entertainment

In many ways, these are appliance distros for anyone who just wants an install-it-and-go approach to things. That works better with dedicated devices than with multipurpose machines, so that is one thing that needs to be kept in mind.

The idea behind this offering is what it offers console gamers. Legacy games and peripherals will work and there even is support for Raspberry Pi as well.

The main purpose of this distro is to offer a home for the KODI entertainment centre on PC and Raspberry Pi devices. It follows from the now defunct OpenELEC project, which ran into trouble when developers’ voices were not given a hearing.

The acronym stands for Open-Source Media Centre and there is KODI here too. Though the distro also is based on Debian, one is tempted to wonder why anyone would not just install that and install KODI on top of it. The answer possibly has something to do with added user-friendliness for those who do not need to deal with such things.

Mandriva Offshoots

Mandrake once was a spin of Red Hat with a more user-friendly focus. In the days before the appearance of Ubuntu, it would have been a choice for those not wanting to overcome obstacles such as a level of hardware support that was much less than what we have today. Later, Mandrake became Mandriva following litigation and the acquisition of Conectiva in 2005. The organisation has declined since those heady days and it became defunct during 2015. Its legacy continues though in the form of two spin-off projects, so all the work of forebears has not been lost.

It was the uncertainty surrounding the future of Mandriva that originally caused this project to be started. Beginnings have been promising, so this is one to watch, though you have to wonder if the now community-based OpenMandriva is stealing some of its limelight.

Of the pair that is listed here, it is OpenMandriva which is a continuation of the now-defunct Mandriva. Seeing how things progress for a project with user-friendliness at its heart will be of interest in these days when Debian, Ubuntu and Linux Mint are so pervasive. Even with those, there are KDE options, so there is a challenge in place.

Anything Russian may not be everyone’s choice given the state of world affairs at the time of writing, yet this still is an offshoot of Mandriva so it gets a mention in this list. Desktop environment options include KDE, XFCE and LXQt and there are various use cases covered by a range of solutions.

Others

Not every distro falls into the above categories, and some that you find here may surprise you. There are some better-known names like openSUSE that go their own way.

Aside from the founder’s dislike of ISO disk images for whatever reason, this distro has its own eccentricities. For example, it is container-friendly, runs in memory as root and much more. This is branded as an experimental distro, and it is that in many ways.

This project creates respins of openSUSE for the sake of a more refined experience. For instance, there are live booting ISO images as well as inclusion of media codecs. There is plenty of choice too when it comes to desktop environments.

From what I have seen, this project seems to be supporting the same needs as Arch, albeit with all software needing to be compiled, so there’s more of a DIY approach. The wiki also comes in handy for those users.

Billing itself as a lean independent distribution focussing on QT and KDE, this is built from the ground up without any dependence on other distros. Some tools, like pacman, naturally come from elsewhere in this otherwise standalone offering.

Here is another distro apart from Ubuntu that has an African name, the Zulu for big chief this time around. It came to my notice among the pages of the now defunct Micro Mart magazine and uses MATE, XFCE, Enlightenment and KDE as its desktop environment choices.

SuSE Linux was one of the first Linux distros that I started to explore and I even had it loaded on my home PC as a secondary operating system for quite a while too before my attention went elsewhere. Only for a PC Plus cover-mounted CD, I might never have discovered it, and it bested Red Hat, which was as prominent then as Fedora is today. When SuSE fell into Novell’s hands, it became both openSUSE and SuSE Linux Enterprise Edition. The former is the community edition and the latter is what Novell, now itself an Attachmate Group company, offers to business customers. As it happens, I continue to keep an eye on openSUSE and even had it on a secondary PC before font resolution deficiencies had me looking elsewhere. While it’s best known for its KDE variant, there is a GNOME one too and it is this that I have been examining.

There was a time when this was being touted as an Ubuntu killer but it never seems to have made good on that promise. Recent troubles within the project haven’t helped either, especially with a long wait between releases.

This Turkish distro recently got reviewed in Linux Format and they were not satisfied with its documentation. It does not help that the website is not in English, so you need a translation tool of your choosing for this one.

Though there also is a spin using the MATE desktop environment, this distro is perhaps better known as the home for the Budgie desktop environment. All of this is for home computing and not its business or enterprise counterpart. There is nothing to say against that and it may make it feel a little more friendly.

The name sounded familiar for some reason and I reckon that’s because Samsung has smartphones running Tizen on sale. The whole point of the project is to power mobile computing platforms with only the mention of netbooks sullying an otherwise non-PC target market that includes tablets and TVs. It’s overseen by the Linux Foundation too.

Changing file timestamps using Windows PowerShell

29th October 2014

Recently, a timestamp got changed on an otherwise unaltered file on me and I needed to change it back. Luckily, I found an answer on the web that used PowerShell to do what I needed and I am recording it here for future reference. The possible commands are below:

$(Get-Item temp.txt).creationtime=$(Get-Date "27/10/2014 04:20 pm")

$(Get-Item temp.txt).lastwritetime=$(Get-Date "27/10/2014 04:20 pm")

$(Get-Item temp.txt).lastaccesstime=$(Get-Date "27/10/2014 04:20 pm")

The first of these did not interest me since I wanted to leave the file creation date as it was. The last write and access times were another matter because these needed altering. The Get-Item commandlet brings up the file, so its properties can be set. Here, these include creationtime, lastwritetime and lastaccesstime. The Get-Date commandlet reads in the provided date and time for use in the timestamp assignment. While PowerShell itself is case-insensitive, I have opted to show the camel case that is produced when you are tabbing through command options for the sake of clarity.

The Get-Item commandlet has an alias of gi, and the Get-Alias commandlet will show you a full list of aliases, while Get-Command (gcm) gives you a list of commandlets. Issuing the following gets you a formatted list that is sent to a text file:

gcm | Format-List > temp2.txt

There is some online help but it is not quite as helpful as it ought to be so I have popped over to Microsoft Learn whenever I needed extra enlightenment. Here is a command that pops the full thing into a text file:

Get-Help Format-List -full > temp3.txt

In fact, getting a book might be the best way to find your way around PowerShell because of all its commandlets and available objects.

For now, other commands that I have found useful include the following:

Get-Service | Format-List

New-Item -Name test.txt -ItemType "file"

The first of these gets you a list of services while the second creates a new blank text file for you and it can create new folders for you too. Other useful commandlets are below:

Get-Location (gl)

Set-Location (sl)

Copy-Item

Remove-Item

Move-Item

Rename-Item

The first of the above is like the cwd or pwd commands that you may have seen elsewhere in that the current directory location is given. Then, the second will change your directory location for you. After that, there are commandlets for copying, deleting, moving and renaming files. These also have aliases so users of the legacy Windows command line or a UNIX or Linux shell can use something that is familiar to them.

Little fixes like the one with which I started this piece are all very good to know but it is in scripting that PowerShell really is said to show its uses. Having seen the usefulness of such things in the world of Linux and UNIX, I cannot disagree with that and PowerShell has its own IDE too. That may be just as well given how much there is to learn. That especially is the case when you might need to issue the following command in a PowerShell session opened using the Run as Administrator option just to get the execution policy as you need it:

Set-ExecutionPolicy RemoteSigned

Issuing Get-ExecutionPolicy will show you if this is needed when the response is: Restricted. A response of RemoteSigned shows you that all is in order, though you need to check that any script you then run has no nasty payload in there, which is why execution is restrictive in the first place. This sort of thing is yet another lesson to be learnt with PowerShell.

Refurbished Computers

12th July 2014

While I never have been a home user of refurbished or second hand kit, there are those who are, and there do appear to be some bargains to be had. For some reason, I get the sense that computing and photographic hardware seems to be heading more upmarket as time goes on so it may be that this becomes the only way of getting cheaper computers unless you stick with Chromebooks and their like. Interestingly, the now defunct Micro Mart magazine did a feature on the subject and even Apple has legitimised the idea with its presence.

Manufacturers

With the premium reputation that Apple has, the chance of bagging any sort of a bargain from them is too good to overlook and they have had a refurbished goods store for longer than many. There are no iPhones here but Macs, iPads and iPods are made available in this way so it is worth a look. The chance of a cheaper Mac of some sort is a tempting idea.

A colleague of mine at work swears by this so much that it is where he looked when buying a laptop for his father. There are home and business sections too so even servers are available along with laptop and desktop PC’s as well as tablets.

Resellers

This is a computer kit reseller that I have never used so far but there have been qualms expressed about their customer service. Like many, they too have a clearance section so it may be worth a look if you fancy taking a little risk.

The mainstay of this lot are pre-used computers and they have been around a while too, even if they disappeared from the web for a while at one stage. They also had a shop near Manchester’s Piccadilly train station though I am left wondering if any of the apparent bargains tempted anyone.

Specialists

Giga Refurb

MicroDream

Pure IT Refurbished

Tier1online.com

Itzoo

These have the quality of their work approved by Microsoft themselves so there should be some confidence here. With Microsoft having put Windows XP out to grass, Windows 7 is being promoted on machines with at least Intel Core 2 Duo CPU’s and prices can be very reasonable too.

Using a BASH command to count the files in a directory

12th March 2024

As part of my backup workflow, I maintain a machine running OpenMediaVault that I only power up when backups are to be performed. Typically, this happens when I have new photography images to load, and I have a NAS that acts as an online backup system. The OpenMediaVault machine is a near-offline counterpart to the NAS for added safety.

Recently, I needed to check on the number of image files in a directory from an SSH session because of a need to create a new repository for 2024. Some files from this year had ended up in the 2023 one, and I needed to be sure that nothing from last year ended up in the 2024 folder, or vice versa. Getting a file count from a trusted source was a quick way of doing exactly this.

Due to clumsiness with the NAS, I had to do this using the OpenMediaVault machine. While I could go mounting drives on an interim basis, it was quicker to work from a BASH session. The trick was to use the wc command for counting the lines output by an invocation of the ls command. An example follows:

ls -l | wc -l

The -l (as in l for Lima) switch forces wc to count lines, while the counterpart (same letter) for ls forces it to list the contents in long form, one item per line. Thus, counting the number of lines gets you the count of the number of files, albeit with one caveat: the long listing starts with a "total" line, so the result is one more than the number of entries, and using ls -1 (the digit one) instead avoids that. The call to the ls command can be customised to add other things like the number of dot files, but the above was enough for my purposes. When the file counts in both 2023 directories matched, I was satisfied that all was in order.
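For a count that includes dot files while leaving out subdirectories, find can stand in for ls. This is a minimal sketch rather than the command used above: the function name count_files is made up for illustration, and the -printf option assumes GNU find, as shipped with Debian-based systems.

```shell
# Count the regular files directly inside a directory.
# Hypothetical helper; dot files are included and subdirectories
# (along with anything inside them) are not counted.
count_files() {
    # Print one dot per file and count the dots, so that unusual
    # file names (even ones containing newlines) cannot skew the result.
    find "${1:-.}" -maxdepth 1 -type f -printf '.' | wc -c
}

# Example usage, comparing two copies of the same year's folder
# (paths are placeholders):
# count_files /path/to/first/2023
# count_files /path/to/second/2023
```

Because only regular files are counted, the figure is not inflated by the "total" line that ls -l adds or by any subdirectories.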