TOPIC: MOTHERBOARD

Upheaval and miniaturisation

The ongoing AI boom got me refreshing my computer assets. One was a hefty upgrade to my main workstation, still powered by Linux. Along the way, I learned a few lessons:

- Processing with LLMs only works on a graphics card when everything can remain within its onboard memory. It is all too easy to fall back to system memory and CPU usage, given the amount of memory you get on consumer graphics cards. That applies even with the latest and greatest from Nvidia, when the main use case is gaming. Things become prohibitively expensive when you go on from there.

- Even with water cooling, keeping a top-of-the-range CPU cool and its fans running quietly remains a challenge, more so than when I last went for a major upgrade. It takes time for things to settle down.

- My Iiyama monitor now feels flaky with input from the latest technology. This is enough to make me look for a replacement, and it is waking up from dormancy that is the real issue. While it was always slow, plugging out from mains electricity and then back in again is a hack that is needed all too often.

- KVM switches may need upgrading to work with the latest graphical input. The monitor may have been a culprit with the problems that I was getting, yet things were smoother once I replaced the unit that I had been using with another that is more modern.

- AMD Ryzen 9 chips now have onboard graphics, a boon when things are not proceeding too well with a dedicated graphics card. Even though this was not the case when the last major upgrade happened, there were no issues like what I faced this time around.

- Having LEDs on a motherboard to tell what might be stopping system startup is invaluable. This helped in July 2021 and averted confusion this time around as well. While only four of them were on offer, knowing which of CPU, DRAM, GPU or system boot needs attention is a big help.

- Optical drives are not needed any longer. Booting off a USB drive was enough to get Linux Mint installed, once I got the image loaded on there properly. Rufus got used, and I needed to select the low-level writing option before things proceeded as I had hoped.

Just like 2021, the 2025 upgrade cycle needed a few weeks for everything to settle down. The previous cycle was more challenging, and this was not just because of an accompanying heatwave. The latest one was not so bedevilled.

Given the above, one might be tempted to go for a less arduous path, like my acquisition of an iMac last year for another place that I own. After all, a Mac Mini packs in quite a lot of power, and it is not the only miniature option. Now that I have one, I have moved image processing off the workstation and onto it. The images are stored on the Linux machine and edited on the Mac, which has plenty of memory and storage of its own. There is also an M4 chip, so processing power is not lacking either.

It could have been used for work affairs, yet I acquired a Geekom A8 for just that. Though seeking work as I write this, my being an incorporated freelancer means that having a dedicated machine that uses my main monitor has its advantages. Virtualisation can allow drift from business affairs to other matters; that is not so easy when a separate machine is involved. There is no shortage of power either with an AMD Ryzen 9 8945HS and Radeon 780M Graphics on board. Add in 32 GB of memory and 2 TB of storage and all is commodious. It can be surprising what a small package can do.

The Iiyama's travails also pop up with these smaller machines, less so on the Geekom than with the Mac. The latter needs the HDMI cable to be removed and reinserted after a delay to sort things out. Perhaps that new monitor is not such an off-the-wall idea after all.

Best left until later in the year?

In the middle of last year, my home computing experience was one of feeling displaced. A combination of a stupid accident and a power outage had rendered my main PC unusable. What followed was an enforced upgrade that used a combination that was familiar to me: Gigabyte motherboard, AMD CPU and Crucial memory. However, assembling that lot and attaching components from the old system resulted in the sound of whirring fans but nothing appearing on-screen. Not having useful beeps to guide me meant that it was a case of undertaking educated guesswork until the motherboard was found to be at fault.

In a situation like this, a better developed knowledge of electronics would have been handy and might have saved me money too. As for the motherboard, it is hard to say whether it was faulty from the outset or whether there was a mishap along the way, either due to ineptitude with static or incompatibility with a power supply. What really tells the tale on the mainboard is the fact that all the other components are working well in other circumstances, even that old power supply.

A few years back, I had another experience with a problematic motherboard, an Asus this time, that ate CPUs and damaged a hard drive before I stabilised things. That was another upgrade attempted in the first half of the year. My first round of PC building was in the third quarter of 1998 and that went smoothly once I realised that a new case was needed. Similarly, another PC rebuild around the same time of year in 2005 was equally painless. Based on these experiences, I should not be blamed for waiting until later in the year before doing another rebuild, preferably a planned one rather than an emergency.

Of course, there may be another factor involved too. The hint was a non-working Sony DVD writer that was acquired early last year when it really was obvious that we were in the middle of a downturn. Could older unsold inventory be a contributor? Well, it fits in with seeing poor results twice. In addition, it would certainly tally with a problematic PC rebuild in 2002 following the end of the Dot-com bubble and after the deadly Al-Qaeda attack on New York's World Trade Centre. An IBM hard drive that was acquired may not have been the best example of the bunch, and the same comment could apply to the Asus motherboard. Though the resulting construction may have been limping, it was working tolerably.

In contrast, last year's episode had me launched into using a Toshiba laptop and a spare older PC for my needs, with an external hard drive enclosure used to extract my data onto other external hard drives to keep me going. While it felt like a precarious arrangement, it was a useful experience in some ways too.

There was cause for making acquaintance with nearby PC component stores that I hadn't visited before, and I got to learn about things that otherwise wouldn't have come my way. Using an external hard drive enclosure for accessing data on hard drives from a non-functioning PC is one of these. Discovering that it is possible to boot from external optical and hard disk drives came as a surprise too and will work so long as there is motherboard support for it.

Another experience came from a crisis of confidence that had me acquiring a bare-bones system from Novatech and populating it with optical and hard disk drives. Then, I discovered that I have no need for power supplies rated more than 300 watts (around 200 W suffices). Turning my PC off more often became a habit, friendly both to the planet and to household running costs too.

Then, there's the beneficial practice of shopping locally, which can suffice. You may not get what PC magazines stick on their hot lists, but shopping online for those pieces doesn't guarantee success either. All of these were useful lessons and, while I'd rather not throw good money after bad, it goes to show that even unsuccessful acquisitions had something to offer in the form of learning opportunities. Whether you consider that worthwhile is up to you.

Still able to build PC systems

This weekend has been something of a success for me on the PC hardware front. Earlier this year, a series of mishaps rendered my former main home PC unusable; it was a power failure that finished it off for good. My remedy was a rebuild using my then usual recipe of a Gigabyte motherboard, AMD CPU and Crucial memory. However, assembling the said pieces never returned the thing to life and I ended up in no man's land for a while, dependent on my backup machine and laptop. That wouldn't have been so bad but for the need for accessing data from the old behemoth's hard drives, but an external drive housing set that in order. Nevertheless, there is something unfinished about work with machines having a series of external drives hanging off them. That appearance of disarray was set to rights by the arrival of a bare-bones system from Novatech in July, with any assembly work restricted to the kitchen table. There was a certain pleasure in seeing a system come to life after my developing a fear that I had lost all of my PC building prowess.

That restoration of order still left finding out why those components bought earlier in the year didn't work together well enough to give me a screen display on start-up. Having electronics testing equipment and the knowledge of its correct use would make any troubleshooting far easier, but I haven't got these. While there is a place near to me where I could go for this, you are left wondering what might be said about a PC build gone wrong. Of course, the last thing that you want to be doing is embarking on a series of purchases that do not resolve the problem, especially in the current economic climate.

One thing to suspect when all doesn't turn out as hoped is the motherboard and, for whatever reason, I always suspect it last. It now looks as if that needs to change after I discovered that it was the Gigabyte motherboard that was at fault. Whether it was faulty from the outset or it came a cropper with a rogue power supply or carelessness with static protection is something that I'll never know. An Asus motherboard did go rogue on me in the past, and it might be that it ruined CPUs and even a hard drive before I laid it to rest. Its eventual replacement put a stop to a year of computing misfortune and kick-started my reliance on Gigabyte. While that faith is under question now, the 2009 computing hardware mishap seems to be behind me, any PC rebuilds will be done on tables, and motherboards will be suspected earlier when anything goes awry.

Returning to the present, my acquisition of an ASRock K10N78 and subsequent building activities have brought a new system using an AMD Phenom X4 CPU and 4 GB of memory into use. In fact, I am writing these very words using the thing. It's all in a new TrendSonic case too (placing an elderly behemoth into retirement) and with a SATA hard drive and DVD writer. Since the new motherboard has onboard audio and graphics, external cards are not needed unless you are an audiophile and/or a gamer; for the record, I am neither. Those additional facilities make for easier building and fault-finding should the undesirable happen.

The new box is running the release candidate of Ubuntu 9.10, which seems to be working without a hitch too. Since earlier builds of 9.10 broke in their VirtualBox VM, you should understand the level of concern that this aroused in my mind; the last thing that you want to be doing is reinstalling an operating system because its booting capability breaks every other day. Thankfully, the RC seems to have none of these rough edges, so I can upgrade the Novatech box, still my main machine and likely to remain so for now, with peace of mind when the time comes.

Booting from external drives

Sticking with older hardware may mean that you miss out on the possibilities offered by later kit, and being able to boot from external optical and hard disk drives was something of which I learned only recently. As with many things, a compatible motherboard is needed, and my enforced summer upgrade means that I have one with the requisite capabilities.

There is usually an external DVD drive attached to my main PC, so that allowed the prospect of a test. A bit of poking around in the BIOS settings for the Foxconn motherboard was sufficient to get it looking at the external drive at boot time. Popping in a CrunchBang Linux live DVD was all that was needed to prove that booting from a USB drive was a goer. That CrunchBang is a minimalist variant of Ubuntu helped with acceptable speed at system startup and afterwards.

Having lived off them while in home PC limbo, the temptation to test out the idea of installing an operating system on an external HD and booting from that is definitely there, though I think that I'll be keeping mine as backup drives for now. Still, there's nothing to stop me installing an operating system onto one of them and giving that a whirl sometime. Of course, speed constraints mean that any use of such an arrangement would be occasional but, in the event of an emergency, such a setup could have its uses and tide you over for longer than a Live CD or DVD. Having the chance to poke around with an alternative operating system as it might exist on a real PC has its appeal too, and avoids the need for any partitioning and other chores that dual booting would require. After all, there's only so much testing that can be done in a virtual machine.

From laptop limbo to a new desktop: A weekend restoration of computing order

This weekend, I finally put my home computing displacement behind me. My laptop had become my main PC, with a combination of external hard drives and an Octigen external hard drive enclosure keeping me motoring in laptop limbo. Having had no joy in the realm of PC building, I decided to go down the partially built route and order a bare-bones system from Novatech. That gave me a Foxconn case and motherboard loaded up with an AMD 7850 dual-core CPU and 2 GB of RAM. With the motherboard offering onboard sound and video capability, all that was needed was to add drives. I added no floppy drive but instead installed a SATA DVD Writer (not sure that it was a successful purchase, though, but that can be resolved at my leisure) and the hard drives from the old behemoth that had been serving me until its demise. A session of work on the kitchen table and some toing and froing ensued as I inched my way towards a working system.

Once I had set all the expected hard disks into place, Ubuntu was capable of being summoned to life, with the only impediment being an insistence on scanning the 1 TB Western Digital and getting stuck along the way. Not having the patience, I skipped this at start-up and later unmounted the drive to let fsck do its thing while I got on with other tasks; the hold-up had been the presence of VirtualBox disk images on the drive. Speaking of VirtualBox, I needed to scale back the capabilities of Compiz, so things would work as they should. Otherwise, it was a matter of updating various directories with files that had appeared on external drives without making it into their usual storage areas. Windows would never have been so tolerant and, as if to prove the point, I needed to repair an XP installation in one of my virtual machines.

In the instructions that came with the new box, Novatech stated that time was a vital ingredient for a build, and they weren't wrong. While the delivery arrived at 09:30, I later got a shock when I saw the time to be 15:15! However, it was time well spent when I noticed the speed increase on putting ImageMagick through its paces with a Perl script. In time, I might get brave and be tempted to add more memory to get up to 4 GB; the motherboard may only have two slots, but that's not such a problem with my planning on sticking with 32-bit Linux for a while to come. My brief brush with its 64-bit counterpart revealed some roughness that warded me off for a little while longer. For now, I'll leave well alone and allow things to settle down again. Lessons for the future remain, over which I may even mull in another post...

Why lateral thinking beats panic when technology breaks

Last night, something idiotic happened to me: I tripped up in my main PC's cables and brought the behemoth crashing about the place. There was some resulting damage, with the keyboard PS/2 socket being put out of action and a busted USB port and mouse. When this happens, thoughts take on the form of a runaway train and the prospect of acquiring a new motherboard and assorted expensive paraphernalia trots into your mind; there are other things that need my cash more. Of course, the last time to be making such big decisions on computer components is when a mental maelstrom has descended upon you.

Eventually, I got myself away from the brink and lateral thinking began to take over. What helped was that most of the system is unaffected, enabling me to write this post with it. While a spare will work for now, a new ergonomic mouse is on order, and cheaper alternatives to the keyboard conundrum have come into play. If PS/2 wasn't an option, then USB remains one, and that was the line of attack that was taken.

It involved a visit to the nearest branch of PC World after work, from where I came away with a new USB hub and a USB-compatible keyboard for less than the price of a new AM2+ Gigabyte motherboard that would have served my needs. Though an otherwise functional Trust keyboard may have been retired, that was a less expensive option than a full PC rebuild, which I may still need to do, albeit with far less immediacy than what flashed before my eyes within the last 24 hours. In fact, acquiring some cable ties should be higher on the acquisition wish list to avoid cable-induced tumbles in the future. It really does pay to be able to step back and see things from a wider perspective.

Adding a new hard drive to Ubuntu

While this is a subject that I thought that I had discussed on this blog before, I can't seem to find any reference to it now. Instead, I have discussed the subject of adding hard drives to Windows machines a while back, which might explain what I was thinking. Trusting the searchability of what you find on here, I'll go through the process.

The rate at which digital images were filling my hard disks brought all of this to pass. Because even extra housekeeping could not stop the collection growing, I went and ordered a 1 TB Western Digital Caviar Green Power from Misco. City Link did the honours with the delivery, and I can credit their customer service for organising that without my needing to get to the depot to collect the thing; that was a refreshing experience that left me pleasantly surprised.

For most of the time, the hard drives that I have had generally got on with the job. However, there was one experience from a time laden with computing mishaps that has left me wary. Assured by good reviews, I went and got myself an IBM DeskStar and its reliability didn't fill me with confidence. Though the business was acquired by Hitachi, that means that I am not touching their version of the same product line either. Travails with an Asus motherboard put me off that brand around the same time as well; I now blame it for going through a succession of AMD Athlon CPUs on me.

The result of that episode is that I have a tendency to go for brands that I can trust from personal experience. Western Digital falls into this category, as does Gigabyte for motherboards, which explains my latest hard drive purchasing decision. That's not to say that other hard drive makers wouldn't satisfy my needs, since I have had no problems with disks from Maxtor or Samsung. For now though, I am sticking with those makers that I know until they let me down, something that I hope never happens.

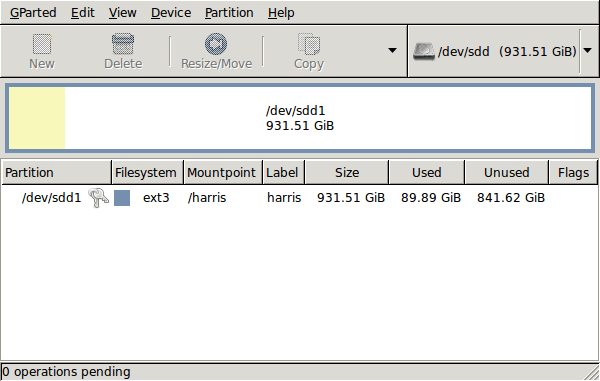

GParted running on Ubuntu

Anyway, let's get back to installing the hard drive. The physical side of the business was the usual shuffle within the PC to add the SATA drive before starting up Ubuntu. From there, it was a matter of firing up GParted (System > Administration > Partition Editor on the menus if you already have it installed). The next step was to find the new empty drive and create a partition table on it. At this point, I selected msdos from the menu before proceeding to set up a single ext3 partition on the drive. You need to select Edit > Apply All Operations from the menus to set things into motion before sitting back and waiting for GParted to do its thing.

After the GParted activities, the next task is to set up automatic mounting for the drive to make it available every time that Ubuntu starts up. The first thing to be done is to create the folder that will be the mount point for your new drive, /newdrive in this example. The next involves editing /etc/fstab with superuser access to add a line like the following with the correct UUID for your situation:

UUID="32cf775f-9d3d-4c66-b943-bad96049da53" /newdrive ext3 defaults,noatime,errors=remount-ro

You can also add a comment like "# /dev/sdd1" above that so that you know what's what in the future. To get the actual UUID that you need to add to fstab, issue a command like one of those below, changing /dev/sdd1 to what is right for you:

sudo vol_id /dev/sdd1 | grep "UUID="   # Older Ubuntu versions

sudo blkid /dev/sdd1 | grep "UUID="    # Newer Ubuntu versions

This is the sort of thing that you get back from vol_id (blkid prints the value in quotes after UUID= instead), and the part after the "=" is what you need:

ID_FS_UUID=32cf775f-9d3d-4c66-b943-bad96049da53
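As a rough sketch of how the UUID output and the fstab line fit together, the two shell functions below strip the prefix from a KEY=value line and assemble an entry in the same shape as the one shown earlier. The function names are hypothetical and made up for illustration. The trailing "0 2" (dump flag and fsck pass order) is my own addition; fstab treats both as zero when they are left out, as in the line above.

```shell
#!/bin/sh
# Hypothetical helpers for illustration; they are not part of any Ubuntu tool.

# Return everything after the first "=" in a KEY=value line,
# e.g. the ID_FS_UUID=... line printed by vol_id.
uuid_from_line() {
    printf '%s\n' "${1#*=}"
}

# Assemble an /etc/fstab entry from a UUID, a mount point and a filesystem type.
# Fields: device, mount point, type, options, dump flag, fsck pass order.
# The final "0 2" is an assumption added here, not taken from the original line.
make_fstab_line() {
    printf 'UUID=%s %s %s defaults,noatime,errors=remount-ro 0 2\n' \
        "$1" "$2" "$3"
}

# Possible usage (not run here because it needs a real device and root access):
#   uuid=$(sudo blkid -s UUID -o value /dev/sdd1)
#   make_fstab_line "$uuid" /newdrive ext3 | sudo tee -a /etc/fstab
```

Printing the line first and only appending it to /etc/fstab once it looks right is the safer habit, since a malformed entry can interrupt the next boot.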

Once all of this has been done, a reboot gets done to mount the device. Once that is complete, you then need to set up folder permissions as required before you can use the drive. This part gets me firing up Nautilus, using gksu and adding myself to the user group in the Permissions tab of the Properties dialogue for the mount point (/newdrive, for example). After that, I issued something akin to the following command to set global permissions:

chmod 775 /newdrive
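For anyone who prefers the terminal to Nautilus, the permissions step might be sketched as below. It assumes that the Nautilus step amounts to changing the group that owns the mount point; share_mount_point is a hypothetical name used only for this example.

```shell
#!/bin/sh
# Hypothetical helper for illustration: hand a directory to a group and
# apply the same 775 mode that the chmod command above sets.
share_mount_point() {
    dir=$1
    group=$2
    chgrp "$group" "$dir" || return 1   # group ownership (assumed equivalent of the Nautilus step)
    chmod 775 "$dir"                     # rwx for owner and group, r-x for everyone else
}

# On a real, root-owned mount point this needs superuser rights, for example:
#   sudo chgrp "$(id -gn)" /newdrive && sudo chmod 775 /newdrive
```

Mode 775 lets the owner and group members write to the folder while others can only read and enter it.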

With that, I had completed what I needed to do to get the WD drive going under Ubuntu. After that IBM DeskStar experience, the new drive remains on probation, but moving some non-essential things on there has allowed me to free some space elsewhere and carry out a reorganisation. Further consolidation will follow, and I hope that the new 931.51 GiB (binary gigabytes of 1024 × 1024 × 1024 bytes, rather than the decimal gigabytes of 1,000,000,000 bytes preferred by hard disk manufacturers) will keep me going for a good while before I need to add extra space again.
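The gap between the two kinds of gigabyte is easy to check with a little arithmetic. The sketch below uses awk for the division; the function names are hypothetical, and the byte count of 1,000,204,886,016 is an assumed figure that is typical for drives sold as 1 TB rather than one read from this particular disk.

```shell
#!/bin/sh
# Hypothetical helpers for illustration only.

# Convert a byte count to binary gigabytes (GiB, 1024 * 1024 * 1024 bytes each).
bytes_to_gib() {
    awk -v b="$1" 'BEGIN { printf "%.2f\n", b / (1024 * 1024 * 1024) }'
}

# Convert a byte count to decimal gigabytes (GB, 1,000,000,000 bytes each).
bytes_to_gb() {
    awk -v b="$1" 'BEGIN { printf "%.2f\n", b / 1000000000 }'
}

# Assumed capacity typical of a drive sold as 1 TB:
#   bytes_to_gib 1000204886016   # prints 931.51
#   bytes_to_gb  1000204886016   # prints 1000.20
```

The same drive is therefore about 1000 GB to the manufacturer and about 931.5 GiB to GParted, with no space actually going missing.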