Using a BASH command to count the files in a directory

12th March 2024

As part of my backup workflow, I maintain a machine running OpenMediaVault that I only power up when backups are to be performed. Typically, this happens when I have new photography images to load, and I have a NAS that acts as an online backup system. The OpenMediaVault machine is a near-offline counterpart to the NAS for added safety.

Recently, I needed to check on the number of image files in a directory from an SSH session because of a need to create a new repository for 2024. Some files from this year had ended up in the 2023 one, and I needed to be sure that nothing from last year ended up in the 2024 folder, or vice versa. Getting a file count from a trusted source was a quick way of doing exactly this.

Due to clumsiness with the NAS, I had to do this using the OpenMediaVault machine. While I could go mounting drives on an interim basis, it was quicker to work from a BASH session. The trick was to use the wc command for counting the lines output by an invocation of the ls command. An example follows:

ls -l | wc -l

The -l (as in l for Lima) switch forces wc to count lines, while the counterpart (same letter) for ls forces it to list the contents in long form, one item per line. Thus, counting the number of lines gets you the count of the number of files, give or take one: the long listing starts with a "total" line, so the figure is one higher than the number of items. That does not matter when comparing two directories, since the same offset applies to both. The call to the ls command can be customised to add other things like the number of dot files, but the above was enough for my purposes. When the files in both 2023 directories matched, I was satisfied that all was in order.
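Since `ls -l` also prints a leading `total` line, a variation that avoids the long listing may be handy when an exact figure is wanted. What follows is only a minimal sketch: the function names are my own for illustration, the `find` variant assumes that no file name contains a newline character, and `-maxdepth` is an extension found in GNU and BSD versions of `find`.

```shell
# Count the visible entries in a directory, one name per line.
# Unlike "ls -l", "ls -1" prints no leading "total" line.
count_entries() {
  ls -1 "$1" | wc -l
}

# Count regular files only, dot files included, without descending
# into subdirectories. Assumes no file name contains a newline.
count_files() {
  find "$1" -maxdepth 1 -type f | wc -l
}

# Example usage (the directory names are placeholders):
# count_files 2023
# count_files 2024
```

The first function counts subdirectories as entries but skips dot files, while the second does the opposite, so which one suits depends on what should be included in the tally.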

Moves to Hugo

30th November 2022

What amazes me is how things can become more complicated over time. Once you knew HTML, CSS and JavaScript, building a website was not that onerous, provided that web browsers played ball with it. Since then, things have got easier to use but more complex at the same time. One example is WordPress: in the early days, themes were much simpler than they are now. The web also has got more insecure over time, and that adds to complexity as well. It sometimes feels as if there is a choice to make between ease of use and simplicity.

It is against that background that I reassessed the technology that I was using on my public transport and Irish history websites. The former used WordPress, while the latter used Drupal. The irony was that the simpler website was using the more complex platform, so the act of going simpler probably was not before time. Alternatives to WordPress were being surveyed for the first of the pair, but none had quite the flexibility, pervasiveness and ease of use that WordPress offers.

There is another approach that has been gaining notice recently. One part of this is the use of Markdown for web publishing. This is a simple and distraction-free plain text format that can be transformed into something more readable. It sees usage in blogs hosted on GitHub, but also facilitates the generation of static websites. The clutter is absent for those who have no need of the Gutenberg Editor on WordPress.

With the content written in Markdown, it can be fed to a static website generator like Hugo. Using defined templates and fixed assets like CSS together with images and other static files, it can slot the content into HTML files very speedily since it is written in the Go programming language. Once you get acclimatised, there are no folder structures that cannot be used, so you get full flexibility in how you build out your website. Sitemaps and RSS feeds can be built at the same time, both using the same input as the HTML files.

In a nutshell, it automates what once needed manual effort using a code editor or a visual web page editor. The use of HTML snippets and layouts means that there is no necessity for hand-coding content, like there was at the start of the web. It also helps that Bootstrap can be built in using Node, so that gives a basis for any styling. Then, SCSS can take care of things, giving even more automation.

Given that there is no database involved in any of this, the required information has to be stored somewhere, and neither the Markdown content nor the layout files contain all that is needed. The main site configuration is defined in a single TOML file, and you can have a separate one of these for every publishing destination; I have development and production servers, which makes this a very handy feature. Otherwise, every Markdown file needs a YAML header where titles, template references, publishing status and other similar information get defined. The layouts then are linked to their components, and control logic and other advanced functionality can be added too.
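To make the idea of a YAML header more concrete, here is a minimal sketch of a shell function that writes a Markdown file with one. The function name, the choice of fields and their values are illustrative assumptions and not taken from my own sites; a title containing double quotes would also need escaping, which this does not attempt.

```shell
# Write a Markdown file with a YAML header of the kind described above.
# The field names and values here are illustrative assumptions.
new_post() {
  file="$1"
  title="$2"
  cat > "$file" <<EOF
---
title: "$title"
date: $(date +%Y-%m-%d)
draft: true
layout: "single"
---

Content goes here in Markdown.
EOF
}

# Example usage (the path is a placeholder):
# new_post content/posts/example.md "An example post"
#
# A build against a chosen configuration file might then look like this,
# where production.toml is a hypothetical file name:
# hugo --config production.toml
```

The header sits between the two lines of three dashes, and everything after it is ordinary Markdown content.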

Because static files are being created, it does mean that site searching and commenting or contact pages cannot work as they would on a dynamic web platform. Often, external services are plugged in using JavaScript. One that I use for contact forms is Getform.io. Then, Zapier has had its uses in using the RSS feed to tweet site updates on Twitter when new content gets added. Though I made different choices, Disqus can be used for comments and Algolia for site searching. Generally, though, you can find yourself needing to pay, particularly if you need to remove advertising or gain advanced features.

Some comments service providers offer open source self-hosted options, but I found these difficult to set up and ended up not offering commenting at all. That was after I tried out Cactus Comments only to find that it was not discriminating between pages, so it showed the same comments everywhere. There are numerous alternatives like Remark42, Hyvor Talk, Commento, FastComments, Utterances, Isso, Mouthful, Muut and HyperComments but trying them all out was too time-consuming for what commenting was worth to me. It also explains why some static websites even send readers to Twitter if they have something to say, though I have not followed this way of working.

For searching, I added a JavaScript/JSON self-hosted component to the transport website, and it works well. However, it adds to the size of what a browser needs to download. That is not a major issue for desktop browsers, but the situation with mobile browsers is such that it has a sizeable effect. Testing with PageSpeed and Lighthouse highlighted this, even if I left things as they are. The solution works well in any case.

One thing that I have yet to work out is how to edit or add content while away from home. Editing files using an SSH connection is as much a possibility as setting up a Hugo publishing setup on a laptop. After that, there is the question of using a tablet or phone, since content management systems make everything web based. These are points that I have yet to explore.

As is natural with a code-based solution, there is a learning curve with Hugo. Reading a book provided some orientation, and looking on the web resolved many conundrums. There is good documentation on the project website, while forum discussions turn up on many a web search. Following any research, there was next to nothing that could not be done in some way.

Migration of content takes some forethought and took quite a bit of time, though there was an opportunity to carry out some housekeeping as well. The history website was small, so copying and pasting sufficed. For the transport website, I used Python to convert what was on the database into Markdown files before refining the result. That provided some automation, but left a lot of work to be done afterwards.

The results were satisfactory, and I like the associated simplicity and efficiency. That Hugo works so fast means that it can handle large websites, so it is scalable. The new Markdown method for content production is not problematical so far apart from the need to make it more portable, and it helps that I found a setup that works for me. This also avoids any potential dealbreakers that continued development of publishing platforms like WordPress or Drupal could bring. For the former, I hope to remain with the Classic Editor indefinitely, but now have another option in case things go too far.

Limiting Google Drive upload & synchronisation speeds using Trickle

9th October 2021

Having had a mishap that lost me some photos in the early days of my dalliance with digital photography, I have been far more careful since then and that now applies to other files as well. Doing regular backups is a must that you find reiterated by many different authors and the current computing climate makes doing that more vital than it ever was.

So, as well as having various local backups, I also have remote ones in the form of OneDrive, Dropbox and Google Drive. These more correctly are file synchronisation services but disciplined use can make them useful as additional storage facilities in the interests of maintaining added resilience. There also are dedicated backup services that I have seen reviewed in the likes of PC Pro magazine but I have yet to make use of those.

Insync

Part of my process for dealing with new digital photo files is to back them up to Google Drive and I did that with a Windows client in the early days but then moved to Insync running on Linux Mint. One drawback to the approach is that this hogs the upload bandwidth of an internet connection that has yet to move to fibre from copper cabling. Having fibre connections to a local cabinet helps but a 100 KiB/s upload speed is easily overwhelmed and digital photo file sizes keep increasing. It does not help that I insist on using more flexible raw formats like DNG, CR2 or CR3 either.

Making fewer images could help to cut the load but I still come away from an excursion with many files because I get so besotted with my surroundings. This means that upload sessions take numerous hours and can extend across calendar days. Ultimately, this makes my internet connection far less usable so I want to throttle upload speed much like what is possible in the Transmission BitTorrent client or in the Dropbox client. Unfortunately, this is not available in Insync so I have tried using the trickle command instead and an example is below:

trickle -d 2000 -u 50 insync

Here, the upload speed is limited to 50 KiB/s while the download speed is limited to 2000 KiB/s. In my case, the latter of these hardly matters while the former leaves me with acceptable internet usability. Insync does not work smoothly with this, however, so occasional restarts are needed to keep file uploads progressing and CPU load also is higher. As rough as the user experience feels, uploads can continue in parallel with other work.

gdrive

One other option that I am exploring is the use of the command-line tool gdrive and this appears to work well with trickle. After downloading and installing the tool, getting going is a matter of issuing the following command and following the instructions:

gdrive about

On web servers, I even have the tool backing up things to Google Drive on a scheduled basis. Because of a Google Drive limitation that I have encountered not only with gdrive but also with Insync and Google’s own Windows Google Drive client, synchronisation only can happen with two new folders, one local and the other remote. Handily, gdrive supports the usual bash style commands for working with remote directories so something like the following will create a directory on Google Drive:

gdrive mkdir -p [ID for parent folder] ttdc

Here, the ID for the parent folder may be omitted but it can be obtained by going to Google Drive online and getting a link location by right-clicking on a folder and choosing the appropriate context menu item. This gets you something like the following and the required identifier is found between the last slash and the first question mark in the address string (so as not to share any real links, I made the address more general below):

https://drive.google.com/drive/folders/[remote folder ID]?usp=sharing
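Picking the identifier out of such an address can be scripted using shell parameter expansion. This is a minimal sketch with a function name of my own choosing, and it assumes that the address has the general form shown above.

```shell
# Extract the folder ID from a Google Drive sharing link: the text
# between the last slash and the first question mark.
folder_id_from_url() {
  url="$1"
  id="${url%%\?*}"   # drop everything from the first "?" onwards
  id="${id##*/}"     # drop everything up to the last "/"
  printf '%s\n' "$id"
}

# Example usage (the address is a placeholder):
# folder_id_from_url "https://drive.google.com/drive/folders/[remote folder ID]?usp=sharing"
```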

Then, synchronisation uses a command like the following:

gdrive sync upload [local folder or file path] [remote folder ID]

There also is the option to do a one-way upload and this is the form of the command used:

gdrive upload [local folder or file path] -p [remote folder ID]

Because every file or folder object has its own ID on Google Drive, it is possible to create two objects on there that appear to have the same name though that is sure to cause confusion even if you know what is happening. Each of the above commands can be throttled using trickle as well:

trickle -d 2000 -u 50 gdrive sync upload [local folder or file path] [remote folder ID]

trickle -d 2000 -u 50 gdrive upload [local folder or file path] -p [remote folder ID]

Handily, this works without the added drama seen with Insync and lends itself to scripting as well so it could be something that I will incorporate into my current workflow. One thing that needs to be watched is file upload failures but there may be ways to catch those and retry them so that would be another thing that needs doing. This is built into Insync and it would be a learning opportunity if I were to stick with gdrive instead.
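One possible way of catching failures and retrying them is a small wrapper function like the one sketched below. It is not something that I have put into service, the function name is my own, and it rests on the assumption that gdrive exits with a non-zero status when an upload fails, which would need checking first.

```shell
# Run a command, retrying whenever it exits with a non-zero status.
# Usage: retry MAX_ATTEMPTS DELAY_SECONDS command [args...]
retry() {
  max="$1"
  delay="$2"
  shift 2
  attempt=1
  until "$@"; do
    if [ "$attempt" -ge "$max" ]; then
      echo "Giving up after $attempt attempts: $*" >&2
      return 1
    fi
    echo "Attempt $attempt failed; retrying in ${delay}s" >&2
    attempt=$((attempt + 1))
    sleep "$delay"
  done
}

# Example usage (the file path and folder ID variable are placeholders):
# retry 5 60 trickle -d 2000 -u 50 gdrive upload ./photo.dng -p "$FOLDER_ID"
```

Keeping the retry logic separate from the upload command means that the same wrapper would work for the sync form of the command too.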

Rethinking photo editing

17th April 2018

Photo editing has been something that I have been doing since my first-ever photo scan in 1998 (I believe it was in June of that year but cannot be completely sure nearly twenty years later). Since then, I have been using a variety of tools for the job and wondered how other photos can look better than my own. What cannot be excluded is my preference for being active in the middle of the day when light is at its bluest as well as a penchant for using a higher ISO of 400. In other words, what I do when making photos affects how they look afterwards as much as the weather that I had encountered.

My reason for mentioning the above aspects of photographic craft is that they affect what you can do in photo editing afterwards, even with the benefits of technological advancement. My tastes have changed over time, so the appeal of re-editing old photos fades when you realise that you only are going around in circles and there always are new ones to share, so that may be a better way to improve.

When I started, I was a user of Paint Shop Pro but have gone over to Adobe since then. First, it was Photoshop Elements, but an offer in 2011 lured me into having Lightroom and the full version of Photoshop. Nowadays, I am a Creative Cloud photography plan subscriber so I get to see new developments much sooner than once was the case.

Even though I have had Lightroom for all that time, I never really made full use of it and preferred a Photoshop-based workflow. Lightroom was used to select photos for Photoshop editing, mainly using adjustments for such things as tones, exposure, levels, hue and saturation. Removal of dust spots, resizing and sharpening were other parts of a still minimalist approach.

What changed all this was a day spent pottering about the 2018 Photography Show at the Birmingham NEC during a cold snap in March. That was followed by my checking out the Adobe YouTube Channel afterwards where there were videos of the talks featured every day of the four-day event. Here are some shortcuts if you want to do some catching up yourself: Day 1, Day 2, Day 3, and Day 4. Be warned though that these videos are long in that they feature the whole day and there are enough gaps that you may wish to fast-forward through them. Even so, there is quite a bit of variety of things to see.

Of particular interest were the talks given by the landscape photographer David Noton who sensibly has a philosophy of doing as little to his images as possible. It helps that his starting points are so good that adjusting black and white points with a little tonal adjustment does most of what he needs. Vibrancy, clarity and sharpening adjustments are kept to a minimum while some work with graduated filters evens out exposure differences between skies and landscapes. It helps that all this can be done in Lightroom, so that set me thinking about trying it out for size and the trick of using the backslash (\) key to switch between raw and processed views is a bonus granted by non-destructive editing. Others may have demonstrated the creation of composite imagery, but simplicity is more like my way of working.

Confusingly, we now have the cloud-based Lightroom CC while the previous desktop counterpart is known as Lightroom Classic CC. Though the former may allow for easy dust spot removal among other things, it is the latter that I prefer because the idea of wholesale image library upload does not appeal to me for now and I already have other places for off-site image backup like Google Drive and Dropbox. The mobile app does look interesting since it allows capturing images on such a device in Adobe’s raw image format DNG. Still, my workflow is set to be more Lightroom-based than it once was and I quite fancy what new technology offers, especially since Adobe is progressing its Sensei artificial intelligence engine. The fact that it has access to many images on its systems due to Lightroom CC and its own stock library (Adobe Stock, formerly Fotolia) must mean that it has plenty of data for training this AI engine.

A display of brand loyalty

12th July 2013

Since 2007, my main camera has been a Pentax K10D DSLR and it has gone on many journeys with me. In fact, more than 15,000 images have been captured with it and I have classed it as an unfailing servant. The autofocus may not be the fastest but my subjects tend to be stationary: landscapes, architecture, flora and transport. Even any bus and train photos have included parked vehicles rather than moving ones so there never have been issues. The hint of underexposure in any photos always can be sorted because DNG files are what I create, with all the raw capture information that is possible to retain. In fact, it has been hard to justify buying another SLR because the K10D has done so well for me.

In recent months, I have been looking at processed photos and asking myself if time has moved along for what is not far from being a six-year-old camera. At various times, I have been looking at higher members of the Pentax range while wondering if an upgrade would be a good idea. First, there was the K7 and then the K5 before the K5 II got launched. Even though its predecessor is still to be found on sale, it was the newer model that became my choice.

My move to Pentax in 2007 was a case of brand disloyalty since I had been a Canon user from when I acquired my first SLR, an EOS 300. Even now, I still have a Powershot G11 that finds itself slipped into a pocket on many a time. Nevertheless, I find that Canon images feel a little washed out prior to post processing and that hasn’t been the case with the K10D. In fact, I have been hearing good things about Nikon cameras delivering punchy results so one of them would be a contender were it not for how well the Pentax performed.

So, what has my new K5 II body gained me that I didn’t have before? For one thing, the autofocus is a major improvement on that in the K10D. It may not stop me persevering with manual focusing for most of the time but there are occasions when the option of solid autofocus is good to have. Other advances include a 16.3 megapixel sensor with a much larger ISO range. The advances in sensor technology since the K10D appeared may give me better quality photos and noise is something that my eyes may have begun to detect in K10D photos even at my usual ISO of 400.

There have been innovations that I don’t need too. Live View is something that I use heavily with the Powershot G11 because it has such a pitiful optical viewfinder. The K5 II has a very bright and sharp one so that function lies dormant, especially when I witnessed dodgy autofocus performance with it in use; manual focusing should be OK, I reckon. By default too, the screen stays on all the time and that’s a nuisance for an optical viewfinder user like me so I looked through the manual and the menus to switch off the thing. My brief flirtation with the image level display met an end for much the same reason though it’s good that it’s there. There is some horizon auto-correction available as a feature and this is left on to see what it offers since there have been a multitude of times when I needed to sort out crooked horizons caused by my handholding the camera.

The K5 II may have a 3″ screen on its back but it has done nothing to increase the size of the camera. If anything, it is smaller than the K10D and that usefully means that I am not on the lookout for a new camera holster. Not having a bigger body also means there is little change in how the camera feels in the hand compared with the older one.

In many ways, the K5 II works very like the K10D once I took control over settings that didn’t suit me. Both have Shake Reduction in their camera bodies though the setting has been moved into the settings menu in the new camera when the older one had a separate switch on its body. Since I’d be inclined to leave it on all the time and prefer not to have it knocked off accidentally, this is not an issue. Otherwise, many of the various switches are in the same places so it’s not that hard to find my way around them.

That’s not to say that there aren’t other changes like the addition of a lock to the mode dial but I have used Canon EOS camera bodies with that feature so I do not consider it a step backwards. The exposure compensation button has been moved to the top of the camera where I found it very easily and have been using perhaps more than on the K10D; it’s also something that I use on the G11 so the experimentation is being brought across to the K5 II now as well. Beside it, there’s a new ISO button so further experimentation can be attempted with that to see how it does.

If I have any criticism, it’s about the clutter of the menus on the K5 II. The long lists through which you scrolled on the K10D have been replaced with a series of extra tabs so that on-screen scrolling is not needed as before. However, I reckon that this breaks up things too much and makes working through the settings look more foreboding to anyone who is not so technical in mindset. Nevertheless, settings such as the type of file to capture are there and I continue to use RAW DNG files as is usual for me though JPEG and Pentax’s own RAW format also are there. For a while, I forgot to set the date, soon found out what I did and the situation was remedied. The same sort of thing applied to storing files in different folders according to the capture date. For my own reasons, I turned this off to put everything into a single PENTX directory to suit my own workflow. My latest discovery among the menus was the ability to add photographer and copyright holder information to the EXIF metadata attached to the image files created by the camera. With legislative proposals that dilute the automatic rights of copyright holders going through the U.K. parliament, this seems a very timely inclusion even if most would prefer that there was no change to copyright law.

Of course, the worth of any camera is in the images that it produces and I have been happy with what I have been getting so far. The bigger files mean fewer images fit on a memory card than before. Thankfully, SDHC card capacities have grown even if I don’t wish to machine gun my photography altogether. While out and about, I was surprised to see apertures like F/14 and F/18 when I was more accustomed to a progression like F/11, F/13, F/16, F/19, F/22, etc. Most of those older values still are there though so there hasn’t been a complete break with convention. The same comment applies to shutter speeds where ones like 1/100 and 1/160 made their appearance where I might have expected just ones like 1/90, 1/125, 1/250 and so on. The extra possibilities, and that is what they are, do allow more flexibility I suppose and may even make it easier to make correct exposures though any judgement of correctness has to be in the eye of a photographer and not what a computer algorithm in a camera determines. For much of the time until now, I have stuck with an ISO of 400 apart from a little testing in a woodland area of an evening soon after the camera arrived.

Since the K5 II came my way a few months ago, I have been meaning to collect my thoughts on here and there has been a delay while I brought my thinking to a sensible close. At one point, it felt like there was so much to say that the piece became larger in my mind than even what you have been reading now. After all, there are other things that I can adjust to see how the resulting images look and white balance is but one of these. The K10D isn’t beyond experimentation either, especially since I discovered that shake reduction was switched off and it has me asking if that lack of quality that I mentioned earlier has another explanation. Of course, actually making use of my tripod would be another good suggestion so it’s safe to say that yet more photographic explorations await.

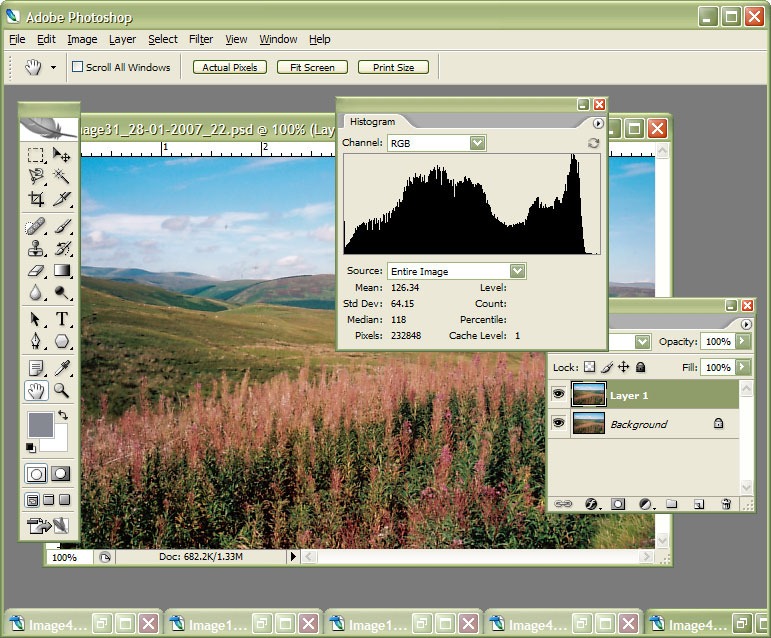

Photoshop CS2 workout

8th March 2007

I am in the process of adding new photos to my online photo gallery at the moment and the exercise is giving my Photoshop CS2 trial version a good amount of use. The experience is also adding a few strings to my bow in graphics editing terms, something that is being helped along by the useful volume that is The Focal Easy Guide to Photoshop CS2.

The most significant change that has happened is that to my workflow. Previously, it took the following form:

- Acquire image from scanner/camera

- For a camera image, do some exposure compensation

- Create copy of image in software’s own native file format (PSPIMAGE/PSP for Paint Shop Pro and PSD/PSB for Photoshop)

- Clean up image with clone stamp tool: removes scanner artefacts or sensor dust from camera images; I really must get my EOS 10D cleaned (the forecast for the coming weekend is hardly brilliant so I might try sending it away).

- Save a new version of the image following clean-up.

- Reduce the size of the digital camera image to 600×400 and create a new file.

- Boost colours of original image with hue/saturation/lightness control; save new version of file.

- Sharpen the image and save another version.

- For web images, save a new file with a descriptive name

- Create JPEG version

- Copy JPEG to Apache web server folders

- Create thumbnail from original JPEG

The new workflow is based upon this:

- Acquire image from scanner/camera

- For a camera image, do some exposure compensation; there is a lot of pre-processing that you can do in Camera Raw

- Create copy of image in software’s own native file format

- Clean up the image with the clone stamp tool and create a new file with _cleaned as its filename suffix. I tried the spot healing brush but didn’t seem to have that much success with it. Maybe I need to try again…

- Add adjustment layer for level correction and save file with _level suffix in its name.

- Add adjustment layer for curves correction

- Add adjustment layer for boosting colours with hue/saturation/lightness control

- Flatten layers and save new image with _flatten suffix in its name

- Sharpen flattened image and create a new version with _sharpened suffix in its name

- For web images, save a new file with a descriptive name

- Create JPEG versions in Apache web server folders; carry out any resizing using bi-cubic sharpening at this point.

Some improvements remain. For instance, separation of raw, intermediate and final photos by storing them in different directories is perhaps one possibility that I should consider. But there are other editing tricks that I have yet to use as well: merged and blended layers. Bi-cubic smoothing for expanding images is another possibility but it is one that requires a certain amount of caution. And I am certain that I will encounter others as I make my way through my reading.