TOPIC: MACHINE LEARNING

When Operations and Machine Learning meet

Here's a scenario you'll recognise: your SRE team drowns in 1,000 alerts daily. 95% are false positives. Meanwhile, your data scientists built five ML models last quarter, and none have reached production. These problems are colliding, and solving each other. Machine learning is moving out of research labs and into the operations that keep your systems running. At the same time, DevOps practices are being adapted to get ML models into production reliably. Since this convergence has created three new disciplines (AIOps, MLOps and LLM observability), here is what you need to know.

Why Traditional Operations Can't Keep Up

Modern systems generate unprecedented volumes of operational data. Logs, metrics, traces, events and user interaction signals create a continuous stream that's too large and too fast for manual analysis.

Your monitoring system might send thousands of alerts per day, but most are noise. A CPU spike in one microservice cascades into downstream latency warnings, database connection errors and end-user timeouts, generating dozens or hundreds of alerts from a single root cause. Without intelligent correlation, engineers waste hours manually connecting the dots.

Meanwhile, machine learning models that could solve real business problems sit in notebooks, never making it to production. The gap between data science and operations is costly. Data scientists lack the infrastructure to deploy models reliably. Operations teams lack the tooling to monitor models that do make it live.

The complexity of cloud-native architectures, microservices and distributed systems has outpaced traditional approaches. Manual processes that worked for simpler systems simply cannot scale.

Three Emerging Practices Changing the Game

Three distinct but related practices have emerged to address these challenges. Each solves a specific problem whilst contributing to a broader transformation in how organisations build and run digital services.

AIOps: Intelligence for Your Operations

AIOps (Artificial Intelligence for IT Operations) applies machine learning to the work of IT operations. Originally coined by Gartner, AIOps platforms collect data from across your environment, analyse it in real-time and surface patterns, anomalies or likely incidents.

The key capability is event correlation. Instead of presenting 1,000 raw alerts, AIOps systems analyse metadata, timing, topological dependencies and historical patterns to collapse related events into a single coherent incident. What was 1,000 alerts becomes one actionable event with a causal chain attached.

Beyond detection, AIOps platforms can trigger automated responses to common problems, reducing time to remediation. Because they learn from historical data, they can offer predictive insights that shift operations away from constant firefighting.

Teams implementing AIOps report measurable improvements: 60-80% reduction in alert volume, 50-70% faster incident response and significant reductions in operational toil. The technology is maturing rapidly, with Gartner predicting that 60% of large enterprises will have adopted AIOps platforms by 2026.

MLOps: Getting Models into Production

Whilst AIOps uses ML to improve operations, MLOps (Machine Learning Operations) is about operationalising machine learning itself. Building a model is only a small part of making it useful. Models change, data changes, and performance degrades over time if the system isn't maintained.

MLOps is an engineering culture and practice that unifies ML development and ML operations. It extends DevOps by treating machine learning models and data assets as first-class citizens within the delivery lifecycle.

In practice, this means continuous integration and continuous delivery for machine learning. Changes to models and pipelines are tested and deployed in a controlled way. Model versioning tracks not just the model artefact, but also the datasets and hyperparameters that produced it. Monitoring in production watches for performance drift and decides when to retrain or roll back.

The MLOps market was valued at $2.2 billion in 2024 and is projected to reach $16.6 billion by 2030, reflecting rapid adoption across industries. Organisations that successfully implement MLOps report that up to 88% of ML initiatives that previously failed to reach production are now being deployed successfully.

A typical MLOps implementation looks like this: data scientists work in their preferred tools, but when they're ready to deploy, the model goes through automated testing, gets versioned alongside its training data and deploys with built-in monitoring for performance drift. If the model degrades, it can automatically retrain or roll back.

The SRE Automation Opportunity

Site Reliability Engineering, originally created at Google, applies software engineering principles to operations problems. It encompasses availability, latency, performance, efficiency, change management, monitoring, emergency response and capacity planning. Rather than replacing AIOps, the likely outcome is convergence. Analytics, automation and reliability engineering become mutually reinforcing, with organisations adopting integrated approaches that combine intelligent monitoring, automated operations and proactive reliability practices.

What This Looks Like in the Real World

The difference between traditional operations and ML-powered operations shows up in everyday scenarios.

Before: An application starts responding slowly. Monitoring systems fire hundreds of alerts across different tools. An engineer spends two hours correlating logs, metrics and traces to identify that a database connection pool is exhausted. They manually scale the service, update documentation and hope to remember the fix next time.

After: The same slowdown triggers anomaly detection. The AIOps platform correlates signals across the stack, identifies the connection pool issue and surfaces it as a single incident with context. Either an automated remediation kicks in (scaling the pool based on learned patterns) or the engineer receives a notification with diagnosis complete and remediation steps suggested. Resolution time drops from hours to minutes.

Before: A data science team builds a pricing optimisation model. After three months of development, they hand a trained model to engineering. Engineering spends another month building deployment infrastructure, writing monitoring code and figuring out how to version the model. By the time it reaches production, the model is stale and performs poorly.

After: The same team works within an MLOps platform. Development happens in standard environments with experiment tracking. When ready, the data scientist triggers deployment through a single interface. The platform handles testing, versioning, deployment and monitoring. The model reaches production in days instead of months, and automatic retraining keeps it current.

These patterns extend across industries. Financial services firms use MLOps for fraud detection models that need continuous updating. E-commerce platforms use AIOps to manage complex microservices architectures. Healthcare organisations use both to ensure critical systems remain available whilst deploying diagnostic models safely.

The Tech Behind the Transformation (Optional Deep Dive)

If you want to understand why this convergence is happening now, it helps to know about transformers and vector embeddings. If you're more interested in implementation, skip to the next section.

The breakthrough that enabled modern AI came in 2017 with a paper titled "Attention Is All You Need". Ashish Vaswani and colleagues at Google introduced the transformer architecture, a neural network design that processes sequential data (like sentences) by computing relationships across the entire sequence at once, rather than step by step.

The key innovation is self-attention. Earlier models struggled with long sequences because they processed data sequentially and lost context. Self-attention allows a model to examine all parts of an input simultaneously, computing relationships between each token and every other token. This parallel processing is a major reason transformers scale well and perform strongly on large datasets.

Transformers underpin models like GPT and BERT. They enable applications from chatbots to content generation, code assistance to semantic search. For operations teams, transformer-based models power the natural language interfaces that let engineers query complex systems in plain English and the embedding models that enable semantic search across logs and documentation.

Vector embeddings represent concepts as dense vectors in high-dimensional space. Similar concepts have embeddings that are close together, whilst unrelated concepts are far apart. This lets models quantify meaning in a way that supports both understanding and generation.

In operations contexts, embeddings enable semantic search. Instead of searching logs for exact keyword matches, you can search for concepts. Query "authentication failures" and retrieve related events like "login rejected", "invalid credentials" or "session timeout", even if they don't contain your exact search terms.

Retrieval-Augmented Generation (RAG) combines these capabilities to make AI systems more accurate and current. A RAG system pairs a language model with a retrieval mechanism that fetches external information at query time. The model generates responses using both its internal knowledge and retrieved context.

This approach is particularly valuable for operations. A RAG-powered assistant can pull current runbook procedures, recent incident reports and configuration documentation to answer questions like "how do we handle database failover in the production environment?" with accurate, up-to-date information.

The technical stack supporting RAG implementations typically includes vector databases for similarity search. As of 2025, commonly deployed options include Pinecone, Milvus, Chroma, Faiss, Qdrant, Weaviate and several others, reflecting a fast-moving landscape that's becoming standard infrastructure for many AI implementations.

Where to Begin

Starting with ML-powered operations doesn't require a complete transformation. Begin with targeted improvements that address your most pressing problems.

If you're struggling with alert-fatigue...

Start with event correlation. Many AIOps platforms offer this as an entry point without requiring full platform adoption. Look for solutions that integrate with your existing monitoring tools and can demonstrate noise reduction in a proof of concept.

Focus on one high-volume service or team first. Success here provides both immediate relief and a template for broader rollout. Track metrics like alerts per day, time to acknowledge and time to resolution to demonstrate impact.

Tools worth considering include established platforms like Datadog, Dynatrace and ServiceNow, alongside newer entrants like PagerDuty AIOps and specialised incident response platforms like incident.io.

If you have ML models stuck in development...

Begin with MLOps fundamentals before investing in comprehensive platforms. Focus on model versioning first (track which code, data and hyperparameters produced each model). This single practice dramatically improves reproducibility and makes collaboration easier.

Next, automate deployment for one model. Choose a model that's already proven valuable but requires manual intervention to update. Build a pipeline that handles testing, deployment and basic monitoring. Use this as a template for other models.

Popular MLOps platforms include MLflow (open source), cloud provider offerings like AWS SageMaker, Google Vertex AI and Azure Machine Learning, and specialised platforms like Databricks and Weights & Biases.

If you're building with LLMs...

Implement observability from day one. LLM applications are different from traditional software. They're probabilistic, can be expensive to run, and their behaviour varies with prompts and context. You need to monitor performance (response times, throughput), quality (output consistency, appropriateness), bias, cost (token usage) and explainability.

Common pitfalls include underestimating costs, failing to implement proper prompt versioning, neglecting to monitor for model drift and not planning for the debugging challenges that come with non-deterministic systems.

The LLM observability space is evolving rapidly, with platforms like LangSmith, Arize AI, Honeycomb and others offering specialised tooling for monitoring generative AI applications in production.

Why This Matters Beyond the Tech

The convergence of ML and operations isn't just a technical shift. It requires cultural change, new skills and rethinking of traditional roles.

Teams need to understand not only deployment automation and infrastructure as code, but also concepts like attention mechanisms, vector embeddings and retrieval systems because these directly influence how AI-enabled services behave in production. They also need operational practices that can handle both deterministic systems and probabilistic ones, whilst maintaining reliability, compliance and cost control.

Data scientists are increasingly expected to understand production concerns like latency budgets, deployment strategies and operational monitoring. Operations engineers are expected to understand model behaviour, data drift and the basics of ML pipelines. The gap between these roles is narrowing.

Security and governance cannot be afterthoughts. As AI becomes embedded in tooling and operations become more automated, organisations need to integrate security testing throughout the development cycle, implement proper access controls and audit trails, and ensure models and automated systems operate within appropriate guardrails.

The organisations succeeding with these practices treat them as both a technical programme and an organisational transformation. They invest in training, establish cross-functional teams, create clear ownership and accountability, and build platforms that reduce cognitive load whilst enabling self-service.

Moving Forward

The convergence of machine learning and operations isn't a future trend, it's happening now. AIOps platforms are reducing alert noise and accelerating incident response. MLOps practices are getting models into production faster and keeping them performing well. The economic case for SRE automation is driving investment and innovation.

The organisations treating this as transformation rather than tooling adoption are seeing results: fewer outages, faster deployments, models that actually deliver value. They're not waiting for perfect solutions. They're starting with focused improvements, learning from what works and scaling gradually.

The question isn't whether to adopt these practices. It's whether you'll shape the change or scramble to catch up. Start with the problem that hurts most (alert fatigue, models stuck in development, reliability concerns) and build from there. The convergence of ML and operations offers practical solutions to real problems. The hard part is committing to the cultural and organisational changes that make the technology work.

Latest developments in the AI landscape: Consolidation, implementation and governance

Artificial intelligence is moving through another moment of consolidation and capability gain. New ways to connect models to everyday tools now sit alongside aggressive platform plays from the largest providers, a steady cadence of model upgrades, and a more defined conversation about risk and regulation. For companies trying to turn all this into practical value, the story is becoming less about chasing the latest benchmark and more about choosing a platform, building the right connective tissue, and governing data use with care. The coming year looks set to reward those who simplify the user experience, embed AI directly into work and adopt proportionate controls rather than blanket bans.

I. Market Structure and Competitive Dynamics

Platform Consolidation and Lock-In

Enterprise AI appears to be settling into a two-platform market. Analysts describe a landscape defined more by integration and distribution than raw model capability, evoking the cloud computing wars. On one side sit Microsoft and OpenAI, on the other Google and Gemini. Recent signals include the pricing of Gemini 3 Pro at around two dollars per million tokens, which undercuts much of the market, Alphabet's share price strength, and large enterprise deals for Gemini integrated with Google's wider software suite. Google is also promoting Antigravity, an agent-first development environment with browser control, asynchronous execution and multi-agent support, an attempt to replicate the pull of VS Code within an AI-native toolchain.

The implication for buyers is higher switching costs over time. Few expect true multi-cloud parity for AI, and regional splits will remain. Guidance from industry commentators is to prioritise integration across the existing estate rather than incremental model wins, since platform choices now look like decade-long commitments. Events lined up for next year are already pointing to that platform view.

Enterprise Infrastructure Alignment

A wider shift in software development is also taking shape. Forecasts for 2026 emphasise parallel, multi-agent systems where a planning agent orchestrates a set of execution agents, and harnesses tune themselves as they learn from context. There is growing adoption of a mix-of-models approach in which expensive frontier models handle planning, and cheaper models do the bulk of execution, bringing near-frontier quality for less money and with lower latency. Team structures are changing as a result, with more value placed on people who combine product sense with engineering craft and less on narrow specialisms.

ServiceNow and Microsoft have announced a partnership to coordinate AI agents across organisations with tighter oversight and governance, an attempt to avoid the sprawl that plagued earlier automation waves. Nvidia has previewed Apollo, a set of open AI physics models intended to bring real-time fidelity to simulations used in science and industry. Albania has appointed an AI minister, which has kicked off debate about how governments should manage and oversee their own AI use. CIOs are being urged to lead on agentic AI as systems become capable of automating end-to-end workflows rather than single steps.

New companies and partnerships signal where capital and talent are heading. Jeff Bezos has returned to co-lead Project Prometheus, a start-up with $6.2 billion raised and a team of about one hundred hires from major labs, focused on AI for engineering and manufacturing in the physical world, an aim that aligns with Blue Origin interests. Vik Bajaj is named as co-CEO.

Deals underline platform consolidation. Microsoft and Nvidia are investing up to $5 billion and $10 billion respectively (totalling $15 billion) in Anthropic, whilst Anthropic has committed $30 billion in Azure capacity purchases with plans to co-design chips with Nvidia.

Commercial Model Evolution

Events and product launches continue at pace. xAI has released Grok 4.1 with an emphasis on creativity and emotional intelligence while cutting hallucinations. On the tooling front, tutorials explain how ChatGPT's desktop app can record meetings for later summarisation. In a separate interview, DeepMind's Demis Hassabis set out how Gemini 3 edges out competitors in many reasoning and multimodal benchmarks, slightly trails Claude Sonnet 4.5 in coding, and is being positioned for foundations in healthcare and education though not as a medical-grade system. Google is encouraging developers towards Antigravity for agentic workflows.

Industry leaders are also sketching commercial models that assume more agentic behaviour, with Microsoft's Satya Nadella promising a "positive-sum" vision for AI while hinting at per-agent pricing and wider access to OpenAI IP under Microsoft's arrangements.

II. Technical Implementation and Capability

Practical Connectivity Over Capability

A growing number of organisations are starting with connectors that allow a model to read and write across systems such as Gmail, Notion, calendars, CRMs, and Slack. Delivered via the Model Context Protocol, these links pull the relevant context into a single chat, so users spend less time switching windows and more time deciding what to do. Typical gains are in hours saved each week, lower error rates, and quicker responses. With a few prompts, an assistant can draft executive email summaries, populate a Notion database with leads from scattered sources, or propose CRM follow-ups while showing its working.

The cleanest path is phased: enable one connector using OAuth, trial it in read-only mode, then add simple routines for briefs, meeting preparation or weekly reports before switching on write access with a "show changes before saving" step. Enterprise controls matter here. Connectors inherit user permissions via OAuth 2.0, process data in memory, and vendors point to SOC 2, GDPR and CCPA compliance alongside allow and block lists, policy management, and audit logs. Many governance teams prefer to begin read-only and require approvals for writes.

There are limits to note, including API rate caps, sync delays, context window constraints and timeouts for long workflows. They are poor fits for classified data, considerable bulk operations or transactions that cannot tolerate latency. Some industry observers regard Claude's current MCP implementation, particularly on desktop, as the most capable of the group. Playbooks for a 30-day rollout are beginning to circulate, as are practitioner workshops introducing go-to-market teams to these patterns.

Agentic Orchestration Entering Production

Practical comparisons suggest the surrounding tooling can matter more than the raw model for building production-ready software. One report set a 15-point specification across several environments and found that Claude Code produced all features end-to-end. The same spec built with Gemini 3 inside Antigravity delivered two thirds of the features, while Sonnet 4.5 in Antigravity delivered a little more than half, with omissions around batching, progress indicators and robust error handling.

Security remains a live issue. One newsletter reports that Anthropic said state-backed Chinese hackers misused Claude to autonomously support a large cyberattack, which has intensified calls for governance. The background hum continues, from a jump in voice AI adoption to a German ruling on lyric copyright involving OpenAI, new video guidance steps in Gemini, and an experimental "world model" called Marble. Tools such as Yorph are receiving attention for building agentic data pipelines as teams look to productionise these patterns.

Tooling Maturity Defining Outcomes

In engineering practice, Google's Code Wiki brings code-aware documentation that stays in sync with repositories using Gemini, supported by diagrams and interactive chat. GitLab's latest survey suggests AI increases code creation but also pushes up demand for skilled engineers alongside compliance and human oversight. In operations, Chronosphere has added AI remediation guidance to cut observability noise and speed root-cause analysis while performance testing is shifting towards predictive, continuous assurance rather than episodic tests.

Vertical Capability Gains

While the platform picture firms up, model and product updates continue at pace. Google has drawn attention with a striking upgrade to image generation, based on Gemini 3. The system produces 4K outputs with crisp text across multiple languages and fonts, can use up to 14 reference images, preserves identity, and taps Google Search to ground data for accurate infographics.

Separately, OpenAI has broadened ChatGPT Group Chats to as many as 20 people across all pricing tiers, with privacy protections that keep group content out of a user's personal memory. Consumer advocates have used the moment to call out the risks of AI toys, citing safety, privacy and developmental concerns, even as news continues to flow from research and product teams, from the release of OLMo 3 to mobile features from Perplexity and a partnership between Stability and Warner Music Group.

Anthropic has answered with Claude Opus 4.5, which it says is the first model to break the 80 percent mark on SWE-Bench Verified while improving tool use and reasoning. Opus 4.5 is designed to orchestrate its smaller Haiku models and arrives with a price cut of roughly two thirds compared to the 4.1 release. Product changes include unlimited chat length, a Claude Code desktop app, and integrations that reach across Chrome and Excel.

OpenAI's additions have a more consumer flavour, with a Shopping Research feature in ChatGPT that produces personalised product guidance using a GPT-5 mini variant and plans for an Instant Checkout flow. In government, a new US executive order has launched the "Genesis Mission" under the Department of Energy, aiming to fuse AI capabilities across 17 national labs for advances in fields such as biotechnology and energy.

Coding tools are evolving too. OpenAI has previewed GPT-5.1-Codex-Max, which supports long-running sessions by compacting conversational history to preserve context while reducing overhead. The company reports 30 percent fewer tokens and faster performance over sessions that can run for more than a day. The tool is already available in the Codex CLI and IDE, with an API promised.

Infrastructure news out of the Middle East points to large-scale investment, with Saudi HUMAIN announcing data centre plans including xAI's first international facility alongside chips from Nvidia and AWS, and a nationwide rollout of Grok. In computer vision, Meta has released SAM 3 and SAM 3D as open-source projects, extending segmentation and enabling single-photo 3D reconstruction, while other product rollouts continue from GPT-5.1 Pro availability to fresh funding for audio generation and a marketing tie-up between Adobe and Semrush.

On the image side, observers have noted syntax-aware code and text generation alongside moderation that appears looser than some rivals. A playful "refrigerator magnet" prompt reportedly revealed a portion of the system prompt, a reminder that prompt injection is not just a developer concern.

Video is another area where capabilities are translating into business impact. Sora 2 can generate cinematic, multi-shot videos with consistent characters from text or images, which lets teams accelerate marketing content, broaden A/B testing and cut the need for studios on many projects. Access paths now span web, mobile, desktop apps and an API, and the market has already produced third-party platforms that promise exports without watermarks.

Teams experimenting with Sora are being advised to measure success by outcomes such as conversion rates, lower support loads or improved lead quality rather than just aesthetic fidelity. Implementation advice favours clear intent, structured prompts and iterative variation, with more advanced workflows assembling multi-shot storyboards, using match cuts to maintain rhythm, controlling lighting for continuity and anchoring character consistency across scenes.

III. Governance, Risk and Regulation

Governance as a Product Requirement

Amid all this activity, data risk has become a central theme for AI leaders. One governance specialist has consolidated common problem patterns into the PROTECT framework, which offers a way to map and mitigate the most material risks.

The first concern is the use of public AI tools for work content, which raises the chance of leakage or unwanted training on proprietary data. The recommended answer combines user guidance, approved internal alternatives, and technical or legal controls such as data scanning and blocking.

A second pressure point is rogue internal projects that bypass review, create compliance blind spots and build up technical debt. Proportionate oversight is key, calibrated to data sensitivity and paired with streamlined governance, so teams are not incentivised to route around it.

Third-party vendors can be opportunistic with data, so due diligence and contractual clauses need to prevent cross-customer training and make expectations clear with templates and guidance.

Technical attacks are another strand, from prompt injection to data exfiltration or the misuse of agents. Layered defences help here, including input validation, prompt sanitisation, output filtering, monitoring, red-teaming, and strict limits on access and privilege.

Embedded assistants and meeting bots come with permission risks when they operate over shared drives and channels, and agentic systems can amplify exposure if left unchecked, so the advice is to enforce least-privilege access, start on low-risk data, and keep robust audit trails.

Compliance risks span privacy laws such as GDPR with their demands for a lawful basis, IP and copyright constraints, contractual obligations, and the AI Act's emphasis on data quality. Legal and compliance checks need to be embedded at data sourcing, model training and deployment, backed by targeted training.

Finally, cross-border restrictions matter. Transfers should be mapped across systems and sub-processors, with checks for Data Privacy Framework certification, standard contractual clauses where needed, and transfer impact assessments that take account of both GDPR and newer rules such as the US Bulk Data Transfer Rule.

Regulatory Pragmatism

Regulators are not standing still, either. In the European Commission has proposed amendments to the AI Act through a Digital Omnibus package as the trilogue process rolls on. Six changes are in focus:

- High-risk timelines would be tied to the approval of standards, with a backstop of December 2027 for Annex III systems and August 2028 for Annex I products if delays continue, though the original August 2026 date still holds otherwise.

- Transparency rules on AI-detectable outputs under Article 50(2) would be delayed to February 2027 for systems placed on the market before August 2026, with no delay for newer systems.

- The plan removes the need to register Annex III systems in the public database where providers have documented under Article 6(3) that a system is not high risk.

- AI literacy would shift from a mandatory organisation-wide requirement to encouragement, except where oversight of high-risk systems demands it.

- There is also a move to centralise supervision by the AI Office for systems built on general-purpose models by the same provider, and for huge online platforms and search engines, which is intended to reduce fragmentation across member states.

- Finally, proportionality measures would define Small Mid-Cap companies and extend simplified obligations and penalty caps that currently apply to SMEs.

If adopted, the package would grant more time and reduce administrative load in some areas, at the expense of certainty and public transparency.

IV. Strategic Implications

The picture that emerges is one of pragmatic integration. Connectors make it feasible to keep work inside a single chat while drawing on the systems people already use. Platform choices are converging, so it makes sense to optimise for the suite that fits the current stack and to plan for switching costs that accumulate over time.

Agentic orchestration is moving from slides to code, but teams will get further by focusing on reliable tooling, clear governance and value measures that match business goals. Regulation is edging towards more flexible timelines and centralised oversight in places, which may lower administrative load without removing the need for discipline.

The sensible posture is measured experimentation: start with read-only access to lower-risk data, design routines that remove drudgery, introduce write operations with approvals, and monitor what is actually changing. The tools are improving quickly, yet the organisations that benefit most will be those that match innovation with proportionate controls and make thoughtful choices now that will hold their shape for the decade ahead.

AI infrastructure under pressure: Outages, power demands and the race for resilience

The past few weeks brought a clear message from across the AI landscape: adoption is racing ahead, while the underlying infrastructure is working hard to keep up. A pair of major cloud outages in October offered a stark stress test, exposing just how deeply AI has become woven into daily services.

At the same time, there were significant shifts in hardware strategy, a wave of new tools for developers and creators and a changing playbook for how information is found online. There is progress on resilience and efficiency, yet the system is still bending under demand. Understanding where it held, where it creaked and where it is being reinforced sets the scene for what comes next.

Infrastructure Stress and Outages

The outages dominated early discussion. An AWS incident that lasted around 15 hours and disrupted more than a thousand services was followed nine days later by a global Azure failure. Each cascaded across systems that depend on them, illustrating how AI now amplifies the consequences of platform problems.

This was less about a single point of failure and more about the growing blast radius when connected services falter. The effect on productivity was visible too: a separate 10-hour ChatGPT downtime showed how fast outages of core AI tools now translate into lost work time.

Power Demand and Grid Strain

Behind the headlines sits a larger story about electricity, grids and planning. Data centres accounted for roughly 4% of US electricity use in 2024, about 183 TWh and the International Energy Agency projects around 945 TWh by 2030, with AI as a principal driver.

The averages conceal stark local effects. Wholesale prices near dense clusters have spiked by as much as 267% at times, household bills are rising by about $16–$18 per month in affected areas and capacity prices in the PJM market jumped from $28.92 per megawatt to $329.17. The US grid faces an upgrade bill of about $720 billion by 2030, yet permitting and build timelines are long, creating a bottleneck just as demand accelerates.

Technical Grid Issues

Technical realities on the grid add another layer of challenge. Fast load swings from AI clusters, harmonic distortions and degraded power quality are no longer theoretical concerns. A Virginia incident in which 60 data centres disconnected simultaneously did not trigger a collapse but did reveal the fragility introduced by concentrated high-performance compute.

Security and New Failure Modes

Security risks are evolving in parallel. Agentic systems that can plan, reason and call tools open new failure modes. AI-enabled spear phishing appears to be 350% more effective than traditional attempts and could be 50 times more profitable, a worrying backdrop when outages already have a clear link to lost productivity.

Security considerations now reach into the tools people use to access AI as well. New AI browsers attract attention, and with that comes scrutiny. OpenAI's Atlas and Perplexity's Comet launched with promising features, yet researchers flagged critical issues.

Comet is vulnerable to "CometJacking", a malicious URL hijack that enables data theft, while Atlas suffered a cross-site request forgery weakness that allowed persistent code injection into ChatGPT memory. Both products have been noted for assertive data collection.

Caution and good hygiene are prudent until the fixes and policies settle. It is a reminder that the convenience of integrating models directly into browsing comes with a new attack surface.

Efficiency and Mitigation Strategies

Industry responses are gathering pace. Efficiency remains the first lever. Hyperscalers now report power usage effectiveness around 1.08 to 1.09, compared with more typical figures of 1.5 to 1.6. Direct chip cooling can cut energy needs by up to 40%.

Grid-interactive operations and more work at the edge offer ways to smooth demand and reduce concentration risk, while new power partnerships hint at longer-term change. Microsoft's agreement with Constellation on nuclear power is one example of how compute providers are thinking beyond incremental efficiency gains.

An emerging pattern is becoming visible through these efforts. Proactive regional planning and rapid efficiency improvements could allow computational output to grow by an order of magnitude, while power use merely doubles. More distributed architectures are being explored to reduce the hazard of over-concentration.

A realistic outlook sets data centres at around 3% of global electricity use by 2030, which is notable but still smaller than anticipated growth from electric vehicles or air conditioning. If the $720 billion in grid investment materialises, it could add around 120 GW of capacity by 2030, as much as half of which would be absorbed by data centres. The resilience gap is real, but it appears to be narrowing, provided the sector moves quickly to apply lessons from each failure.

Regional and Policy Responses

Regional policies are starting to encourage resilience too. Oregon's POWER Act asks operators to contribute to grid robustness, Singapore's tight focus on efficiency has delivered around a 30% power reduction even as capacity expands and a moratorium in Dublin has pushed growth into more distributed build-outs. On the U.S. federal government side, the Department of Homeland Security updated frameworks after a 2024 watchdog warning, with AI risk programmes now in place for 15 of the 16 critical infrastructure sectors.

Hardware Competition and Strategy

Competition is sharpening. Anthropic deepened its partnership with Google Cloud to train on TPUs, a move that challenges Nvidia's dominance and signals a broader rebalancing in AI hardware. Nvidia's chief executive has acknowledged TPUs as robust competition.

Another fresh entry came from Extropic, which unveiled thermodynamic sampling units, a probabilistic chip design that claims up to 10,000-fold lower energy use than GPUs for AI workloads. Development kits are shipping and a Z-1 chip is planned for next year, yet as with any radical architecture, proof at scale will take time.

Nvidia, meanwhile, presented an ambitious outlook, targeting $500 billion in chip revenue by 2026 through its Blackwell and Rubin lines. The US Department of Energy plans seven supercomputers comprising more than 100,000 Blackwell GPUs and the company announced partnerships spanning pharmaceuticals, industrials and consumer platforms.

A $1 billion investment in Nokia hints at the importance of AI-centric networks. New open-source models and datasets accompanied the announcements, and the company's share price surged to a record.

Corporate Restructuring

Corporate strategy and hardware choices also entered a new phase. OpenAI completed its restructuring into a public benefit corporation, with a rebranded OpenAI Foundation holding around $130 billion in equity and allocating $25 billion to health and AI resilience. Microsoft's stake now sits at about 27% and is worth roughly $135 billion, with technology rights retained through 2032. Both parties have scope to work with other partners. OpenAI committed around $250 billion to Azure yet retains the ability to use other compute providers. An independent panel will verify claims of artificial general intelligence, an unusual governance step that will be watched closely.

Search and Discovery Evolution

Away from infrastructure, the way audiences find and trust information is shifting. Search is moving from the old aim of ranking for clicks to answer engine optimisation, where the goal is to be quoted by systems such as ChatGPT, Claude or Perplexity.

The numbers explain why. Google handled more than five trillion queries in 2024, while generative platforms now process around 37.5 million prompt-like searches per day. Google's AI Overviews, which surface summary answers above organic results, have reshaped click behaviour.

Independent analyses report top-ranking pages seeing click-through rates fall by roughly a third where Overviews appear, with some keywords faring worse, and a Pew study finds overall clicks on such results dropping from 15% to 8%. Zero-click searches rose from around 56% to 69% between May 2024 and May 2025.

Chegg's non-subscriber traffic fell by 49% in this period, part of an ongoing dispute with Google. Google counters that total engagement in covered queries has risen by about 10%. Whichever way that one reads the data, the direction is clear: visibility is less about rank position and more about being cited by a summarising engine.

In practice, that means structuring content, so a model can parse, trust and attribute it. Clear Q&A-style sections with direct answers, followed by context and cited evidence, help models extract usable statements. Schema markup for FAQs and how-to content improves machine readability.

Measuring success also changes. Traditional analytics rarely show when an LLM quotes a source, so teams are turning to tools that track citations in AI outputs and tying those to conversion quality, branded search volume and more in-depth engagement with pricing or documentation. It is not a replacement for SEO so much as a layer that reinforces it in an AI-first environment.

Developer Tools and Agentic Workflows

On the tools front, developers saw an acceleration in agent-centred workflows. Cursor launched its first in-house coding model, Composer, which aims for near-frontier quality while generating code around four times faster, often in under 30 seconds.

The broader Cursor 2.0 update added multi-agent capabilities, with as many as eight assistants able to work in parallel, alongside browsing, a test browser and voice controls. The direction of travel is away from single-shot completions and towards orchestration and review. Tutorials are following suit, demonstrating how to scaffold tasks such as a Next.js to-do application using planning files, parallel agent tasks and quick integration, with voice prompts in the loop.

Open-source and enterprise ecosystems continue to expand. GitHub introduced Agent HQ for coordinating coding agents, Google released Pomelli to generate marketing campaigns and IBM's Granite 4.0 Nano models brought larger on-device options in the 350 million to 1.5 billion parameter range.

FlowithOS reported strong scores on agentic web tasks, while Mozilla announced an open speech dataset initiative, and Kilo Code, Hailuo 2.3 and other projects broadened choice across coding and video. Grammarly rebranded as Superhuman, adding "Superhuman Go" agents to speed up writing tasks.

Creative Tools and Partnerships

Creative workflows are evolving quickly, too. Adobe used its MAX event to add AI assistants to Photoshop and Express, previewed an agent called Project Moonlight, and upgraded Firefly with conversational "Prompt to Edit" controls, custom image models and new video features including soundtracks and voiceovers. Partnerships mean Gemini, Veo and Imagen will sit inside Adobe tools, and Premiere's editing capabilities now extend to YouTube Shorts.

Figma acquired Weavy and rebranded it as Figma Weave for richer creative collaboration, and Canva unveiled its own foundation "Design Model" alongside a Creative Operating System meant to produce fully editable, AI-generated designs. New Canva features take in a revised video suite, forms, data connectors, email design, a 3D generator and an ad creation and performance tool called Grow, while Affinity is relaunching as a free, integrated professional app. Other entrants are trying to blend model strengths: one agent was trailed with Sora 2 clip stitching, Veo 3.1 visuals and multimodel blending for faster design output.

Music rights and AI found a new footing. Universal Music Group settled a lawsuit with Udio, the AI music generator, and the two will form a joint venture to launch a licensed platform in 2026. Artists who opt in will be paid both for training models on their catalogues and for remixes. Udio disabled song downloads following the deal, which annoyed some users, and UMG also announced a "responsible AI" alliance with Stability AI to build tools for artists. These arrangements suggest a path towards sanctioned use of style and catalogue, with compensation built in from the start.

Research and Introspection

Research and science updates added depth. Anthropic reported that its Claude system shows limited introspection, detecting planted concepts only about 20% of the time, separating injected "thoughts" from text and modulating its internal focus. That highlights both the promise and limits of transparency techniques, and the potential for models to conceal or fail to surface certain internal states.

UC Berkeley researchers demonstrated an AI-driven load balancing algorithm with around 30% efficiency improvements, a result that could ripple through cloud performance. IBM ran quantum algorithms on AMD FPGAs, pointing to progress in hybrid quantum-classical systems.

OpenAI launched an AI-integrated web browser positioned as a challenger to incumbents, Perplexity released a natural-language patents search and OpenAI's Aardvark, a GPT-5-based security agent, entered private beta.

Anthropic opened a Tokyo office and signed a cooperation pact with Japan's AI Safety Institute. Tether released QVAC Genesis I, a large open STEM dataset of more than one million data points and a local workbench app aimed at making development more private and less dependent on big platforms.

Age Restrictions and Policy

Meanwhile, policy considerations are reaching consumer platforms. Character AI will restrict users under 18 from open-ended chatbot conversations from late November, replacing them with creative tools and adding behaviour-based age detection, a response to pressure and proposals such as the GUARD Act.

Takeaways

Put together, the picture is one of rapid interdependence and swift correction. The infrastructure is not breaking, but it is being stretched, and recent failures have usefully mapped the weak points. If the sector continues to learn quickly from its own missteps, the resilience gap will continue to narrow, and the next round of outages will be less disruptive than the last.

Investment is flowing into grids and cooling, policy is nudging towards resilience, and compute providers are hedging hardware bets by searching for efficiency and supply assurance. On the application layer, agents are becoming a primary interface for work, creative tools are converging around editability and control, and discovery is shifting towards being quoted by machines rather than clicked by humans.

Security lapses at the interface are a reminder that novelty often arrives before maturity. The most likely path from here is uneven but forward: data centre power may rise, yet efficiency and distribution can blunt the impact; answer engines may compress clicks, yet they can send higher intent visitors to clear, well-structured sources; hardware competition may fragment the stack, yet it can also reduce concentration risk.

Some Data Science newsletters that may be worth your time

Staying informed about developments in data science and artificial intelligence without drowning in an endless stream of blog posts, research papers and tool announcements presents a genuine challenge for practitioners. The newsletters profiled below offer a solution to this problem by delivering curated digests at weekly or near-weekly intervals, filtering what matters from the constant flow of new content across the field. Each publication serves a distinct purpose, from broad data science coverage and community event notifications to AI business strategy and statistical foundations, allowing readers to select resources that match their specific interests, whether technical depth, practical application, career development or strategic awareness. What follows examines what each newsletter offers, who benefits most from subscribing, and what limitations or trade-offs readers should consider when choosing which digests merit a place in their inbox.

Launched in 2014 by Lon Reisberg, this newsletter distinguishes itself through expert curation with minimal hype. It maintains strong editorial consistency and neutrality, presenting a handful of carefully selected articles that genuinely matter rather than overwhelming subscribers with dozens of links. The free version delivers this curated digest, whilst the Pro tier (fifty dollars annually) offers searchable archives spanning over 250 issues back to 2019, plus AI-powered learning tools including a SQL tutor and interview coach. The newsletter's defining characteristic is its quality-over-quantity approach, serving professionals who trust expert curation to surface what is genuinely important without the noise and hype that characterises many industry publications.

Data Science Weekly Newsletter

One of the oldest independent data science newsletters, having published over 400 issues since 2014, this publication sets itself apart through longevity and unwavering consistency. It delivers every Thursday without fail, maintaining a simple, distraction-free format with no over-commercialisation or fluff. Its unique value lies in this dependability, with subscribers knowing exactly what to expect each week, making it a practical baseline for staying current without surprises or dramatic shifts in editorial direction.

Unlike newsletters that simply curate external content, this publication builds its own ecosystem of learning resources, offering something fundamentally different through its open, community-driven approach. It combines free courses (Zoomcamps), events and a supportive Slack community, with all materials publicly available on GitHub. The newsletter keeps members informed about upcoming cohorts, webinars and talks within this collaborative environment. The defining feature is its entirely open and peer-supported approach, where readers gain access not just to information, but to hands-on learning opportunities and a community of practitioners willing to help each other grow.

Founded in 1997 by Gregory Piatetsky-Shapiro, this publication stands apart through industry authority spanning nearly three decades. It holds unmatched credibility through its longevity and comprehensive coverage, known for its annual software polls, data science career resources and balanced mix of expert articles, surveys and tool trends that appeal equally to technical practitioners and managers seeking a global overview of the field. What sets it apart is this authoritative position, with few publications able to match its track record or breadth of influence across both technical and strategic aspects of data science and AI.

Connected to the Open Data Science Conference network, this newsletter distinguishes itself as the gateway to the global data science event ecosystem. It serves as the practitioner's bridge to events, training, webinars and conferences worldwide. It covers the full stack, from tutorials and research to business use cases and career advice, but its distinctive strength lies in connecting readers to the broader data science community through live events and practical learning opportunities. The defining characteristic is this conference-linked, community-rich approach, proving especially valuable for professionals who want to remain active participants in the field rather than passive consumers of content.

Maintaining a unique position by focusing entirely on statistical foundations, Whilst most data science newsletters chase the latest AI developments, it maintains an unwavering focus on statistics and foundational analysis, providing step-by-step tutorials for Excel, R and Python that emphasise statistical intuition over trendy techniques. This singular focus on fundamentals makes it unique, serving as an essential complement to AI-focused newsletters and helping readers build the statistical knowledge base that underpins sound data science practice.

Created by the makers of KDnuggets, this digital newsletter and media platform carves out a distinctive niche with business-focused AI news for non-technical leaders. It curates AI developments specifically for executives and decision-makers, emphasising practical, non-technical insights about tools, regulations and market moves, backed by an AI tool database and a claimed community of over 400,000 subscribers. What sets it apart is this strategic, implementation-focused perspective, concentrating on what AI means for business strategy rather than explaining how AI works, making it accessible to leaders without deep technical backgrounds.

Published weekly by DeepLearning.AI, co-founded by Andrew Ng, this newsletter offers trusted commentary that combines AI news with insightful analysis. Written by leading experts, it provides a balanced view that merges academic grounding with applied, real-world context. The distinguishing feature is this authoritative perspective on implications, helping engineers, product teams and business leaders understand why developments matter and how to think about their practical impact rather than simply reporting what happened.

An AI email newsletter roundup: Cutting through the noise

This time last year, I felt out of the loop on all things AI. That was put to rights during the autumn when I experimented a lot with GenAI while enhancing travel content on another portal. In addition, I subscribed to enough email newsletters that I feel the need to cull them at this point. Maybe I should use a service like Kill the Newsletter to consolidate things into an RSS feed instead; that sounds like an interesting option for dealing with any overload.

So much is happening in this area that it is too easy to feel overwhelmed by what is happening. That sense got me compiling the state of things in a previous post using some help from GenAI, though I was making the decisions about what was being consolidated and how it was being done. The whole process took a few hours, an effort clearly beyond a single button push.

This survey is somewhat eclectic in its scope; two of the newsletters are hefty items, while others include brevity as part of their offer. Regarding the latter, I found strident criticism of some of them (The Rundown and Superhuman are two that are mentioned) in an article published in the Financial Times, which is behind a paywall. Their content has been called slop, with the phrase slopaganda being coined and used to describe this. That cannot be applied everywhere, though. Any brevity cannot cloak differences in tone and content choices can help with developing a more rounded view of what is going on with AI.

This newsletter came to my notice because I attended SAS Innovate on Tour 2025 in London last June. Oliver Patel, who authors this and serves as Enterprise AI Governance Lead at AstraZeneca as well as contributing to various international organisations including the OECD Expert Group on AI Risk and Accountability, was a speaker with the theme of his talk naturally being AI governance as well as participating in an earlier panel on the day. Unsurprisingly, the newsletter also got a mention.

It provides in-depth practical guidance on artificial intelligence governance and risk management for professionals working in enterprise environments, though not without a focus on scaling governance frameworks across organisations. Actionable insights are emphasised in place of theoretical concepts, covering areas such as governance maturity models that progress from nascent stages through to transformative governance, implementation strategies and leadership approaches needed to drive effective AI governance within companies.

Patel brings experience from roles spanning policy work, academia and privacy sectors, including positions with the UK government and University College London, which informs his practical approach to helping organisations develop robust AI governance structures. The newsletter targets AI governance professionals, risk managers and executives who need clear, scalable solutions for real-world implementation challenges, and all content remains freely accessible to subscribers.

Unlike other newsletters featured here, this is a seven-day publication that delivers a five‑minute digest on AI industry happenings each day that combines news, productivity tips, polls and AI‑generated art. It was launched in June 2023 by Matt Village and Adam Biddlecombe, using of beehiiv’s content‑focused platform that was acquired by HubSpot in March 2025, placing it within the HubSpot Media Network.

Created by Zain Kahn and based in Toronto, weekday issues of this newsletter typically follow a structured format featuring three AI tools for productivity enhancement, two significant AI developments and one quick tutorial to develop practical skills. On Saturdays, there is a round-up on what is happening in robotics, while the Sunday issue centres on developments in science. Everything is crafted to be brief, possibly allowing a three-minute survey of latest developments.

The Artificially Intelligent Enterprise

My interest in the world of DevOps led me to find out about Mark Hinkle, the solopreneur behind Peripety Labs and his in-depth weekly newsletter published every Friday that features comprehensive deep dives into strategic trends and emerging technologies. This has been complemented by a shorter how-to version which focusses on concrete AI lessons and implementation tips and comes out every Tuesday, taking forward a newsletter acquired from elsewhere. The idea is that we should concentrate on concrete AI lessons and implementation tips in place of hype, particularly in business settings. These forms part of The AIE Network alongside complementary publications including AI Tangle, AI CIO and AI Marketing Advantage.

Found though my following the Artificially Intelligent Enterprise, this daily newsletter delivers artificial intelligence developments and insights within approximately five minutes of reading time per issue. Published by Rowan Cheung, it covers key AI developments, practical guides and tool recommendations, with some articles spanning technology and robotics categories. Beyond the core newsletter, the platform operates AI University, which provides certificate courses, implementation guides, expert-led workshops and community networking opportunities for early adopters.

From boardroom to code: More options for AI and Data Science education

The artificial intelligence revolution has created an unprecedented demand for education that spans from executive strategy to technical implementation. Modern professionals face the challenge of navigating a landscape where understanding AI's business implications proves as crucial as mastering its technical foundations. This comprehensive examination explores five distinguished programmes that collectively address this spectrum, offering pathways for business professionals, aspiring data scientists and technical specialists seeking advanced expertise.

Strategic Business Implementation Through Practical AI Tools

LinkedIn Learning's Applying Generative AI as a Business Professional programme represents the entry point for professionals seeking immediate workplace impact. This focused five-hour curriculum across six courses addresses the practical reality that most business professionals need functional AI literacy rather than technical mastery. The programme emphasises hands-on application of contemporary tools including ChatGPT, Claude and Microsoft Copilot, recognising that these platforms have become integral to modern professional workflows.

The curriculum's strength lies in its emphasis on prompt engineering techniques that yield immediate productivity gains. Participants learn to craft effective queries that consistently produce useful outputs, a skill that has rapidly evolved from novelty to necessity across industries. The programme extends beyond basic tool usage to include strategies for creating custom GPTs without programming knowledge, enabling professionals to develop solutions that address specific organisational challenges.

Communication enhancement represents another critical component, as the programme teaches participants to leverage AI for improving written correspondence, presentations and strategic communications. This practical focus acknowledges that AI's greatest business value often emerges through augmenting existing capabilities rather than replacing human expertise. The inclusion of critical thinking frameworks for AI-assisted decision-making ensures that participants develop sophisticated approaches to integrating artificial intelligence into complex business processes.

Academic Rigour Meets Strategic AI Governance

The University of Pennsylvania's AI for Business Specialisation on Coursera elevates business AI education to an academic level whilst maintaining practical relevance. This four-course programme, completed over approximately four weeks, addresses the strategic implementation challenges that organisations face when deploying AI technologies at scale. The curriculum's foundation in Big Data fundamentals provides essential context for understanding the data requirements that underpin successful AI initiatives.

The programme's exploration of machine learning applications in marketing and finance demonstrates how AI transforms traditional business functions. Participants examine customer journey optimisation techniques, fraud prevention methodologies and personalisation technologies that have become competitive necessities rather than optional enhancements. These applications receive thorough treatment that balances technical understanding with strategic implications, enabling participants to make informed decisions about AI investments and implementations.

Particularly valuable is the programme's emphasis on AI-driven people management practices, addressing how artificial intelligence reshapes human resources, talent development and organisational dynamics. This focus acknowledges that successful AI implementation requires more than technological competence; it demands sophisticated understanding of how these tools affect workplace relationships and employee development.

The specialisation's coverage of strategic AI governance frameworks proves especially relevant as organisations grapple with ethical deployment challenges. Participants develop comprehensive approaches to responsible AI implementation that address regulatory compliance, bias mitigation and stakeholder concerns. This academic treatment of AI ethics provides the foundational knowledge necessary for creating sustainable AI programmes that serve both business objectives and societal responsibilities.

Industry-Standard Professional Development

IBM's Data Science Professional Certificate represents a bridge between business understanding and technical proficiency, offering a comprehensive twelve-course programme designed for career transition. This four-month pathway requires no prior experience whilst building industry-ready capabilities that align with contemporary data science roles. The programme's strength lies in its integration of technical skill development with practical application, ensuring graduates possess both theoretical knowledge and hands-on competency.

The curriculum's progression from Python programming fundamentals through advanced machine learning techniques mirrors the learning journey that working data scientists experience. Participants gain proficiency with industry-standard tools including Jupyter notebooks, GitHub and Watson Studio, ensuring familiarity with the collaborative development environments that characterise modern data science practice. This tool proficiency proves essential for workplace integration, as contemporary data science roles require seamless collaboration across technical teams.

The programme's inclusion of generative AI applications reflects IBM's recognition that artificial intelligence has become integral to data science practice rather than a separate discipline. Participants learn to leverage AI tools for data analysis, visualisation and insight generation, developing capabilities that enhance productivity whilst maintaining analytical rigour. This integration prepares trainees for data science roles that increasingly incorporate AI-assisted workflows.

Real-world project development represents a crucial component, as participants build comprehensive portfolios that demonstrate practical proficiency to potential employers. These projects address authentic business challenges using genuine datasets, ensuring that participants can articulate their capabilities through concrete examples.

Advanced Technical Mastery Through Academic Excellence

Andrew Ng's Machine Learning Specialisation on Coursera establishes the technical foundation for advanced AI practice. This three-course programme, completed over approximately two months, provides comprehensive coverage of core machine learning concepts whilst emphasising practical implementation skills. Andrew Ng's reputation as an AI pioneer lends exceptional credibility to this curriculum, ensuring that participants receive instruction that reflects both academic rigour and industry best practices.

The specialisation's treatment of supervised learning encompasses linear and logistic regression, neural networks and decision trees, providing thorough grounding in the algorithms that underpin contemporary machine learning applications. Participants develop practical proficiency with Python, NumPy and scikit-learn, gaining hands-on experience with the tools that professional machine learning practitioners use daily. This implementation focus ensures that theoretical understanding translates into practical capability.

Unsupervised learning includes clustering algorithms, anomaly detection techniques and certain approaches in recommender systems, all of which contribute to powering modern digital experiences. The programme's exploration of reinforcement learning provides exposure to the techniques driving advances in autonomous systems and game-playing AI. This breadth ensures that participants understand the full spectrum of machine learning approaches, rather than developing narrow expertise in specific techniques.

Cutting-Edge Deep Learning Applications

Again available through Coursera, Andrew Ng's Deep Learning Specialisation extends technical education into the neural network architectures that drives contemporary AI. This five-course programme, spanning approximately three months, addresses the advanced techniques that enable computer vision, natural language processing and complex pattern recognition applications. The intermediate-level curriculum assumes foundational machine learning knowledge whilst building expertise in cutting-edge methodologies.

Convolutional neural network coverage provides comprehensive understanding of computer vision applications, from image classification through object detection and facial recognition. Participants develop practical skills with CNN architectures that power visual AI applications across industries. The programme's treatment of recurrent neural networks and LSTMs addresses sequence processing challenges in speech recognition, machine translation and time series analysis.

The specialisation's exploration of transformer architectures proves particularly relevant given their central role in large language models and natural language processing breakthroughs. Participants gain understanding of attention mechanisms, transfer learning techniques and the architectural innovations that enable modern AI capabilities. This coverage ensures they understand the technical foundations underlying contemporary AI advances.

Real-world application development represents a crucial component, as participants work on speech recognition systems, machine translation applications, image recognition tools and chatbot implementations. These projects utilise TensorFlow, a dominant framework for deep learning development, ensuring that graduates possess practical experience with production-ready tools.

Strategic Integration and Future Pathways

These five programmes collectively address the comprehensive skill requirements of the modern AI landscape, from strategic business implementation through advanced technical development. The progression from practical tool usage through academic business strategy to technical mastery reflects the reality that successful AI adoption requires capabilities across multiple domains. Organisations benefit most when business leaders understand AI's strategic implications, whilst technical teams possess sophisticated implementation capabilities.

The integration of business strategy with technical education acknowledges that artificial intelligence's transformative potential emerges through thoughtful application rather than technological sophistication alone. These programmes prepare professionals to contribute meaningfully to AI initiatives regardless of their specific role or technical background, ensuring that organisations can build comprehensive AI capabilities that serve both immediate needs and long-term strategic objectives.

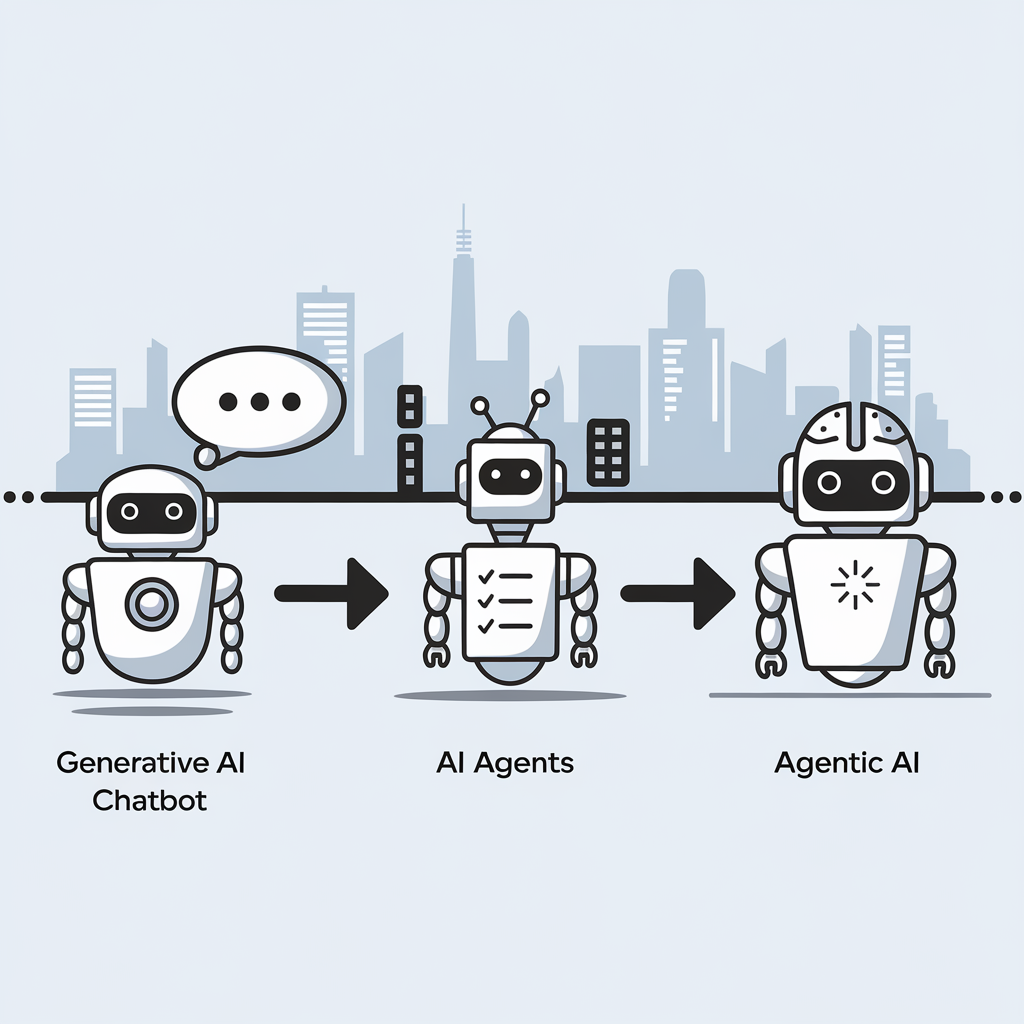

The critical differences between Generative AI, AI Agents, and Agentic Systems

The distinction between three key artificial intelligence concepts can be explained without technical jargon. Here then are the descriptions:

- Generative AI functions as a responsive assistant that creates content when prompted but lacks initiative, memory or goals. Examples include ChatGPT, Claude and GitHub Copilot.

- AI Agents represent a step forward, actively completing tasks by planning, using tools, interacting with APIs and working through processes independently with minimal supervision, similar to a junior colleague.

- Agentic AI represents the most sophisticated approach, possessing goals and memory while adapting to changing circumstances; it operates as a thinking system rather than a simple chatbot, capable of collaboration, self-improvement and autonomous operation.

This evolution marks a significant shift from building applications to designing autonomous workflows, with various frameworks currently being developed in this rapidly advancing field.

Claude Projects: Reusing your favourite AI prompts

Some things that I do with Anthropic Claude, I end up repeating. Generating titles for pieces of text or rewriting text to make it read better are activities that happen a lot. Others would include the generation of single word previews for a piece or creating a summary.

Python or R scripts come in handy for summarisation, either for a social media post or for introduction into other content. In fact, this is how I go much of the time. Nevertheless, I found another option: using Projects in the Claude web interface.

These allow you to store a prompt that you reuse a lot in the Project Knowledge panel. Otherwise, you need to supply a title and a description too. Once completed, you just add your text in there for the AI to do the rest. Title generation and text rewriting already are set up like this, and keywords could follow. It is a great way to reuse and refine prompts that you use a lot.

Observations from selected sessions of SAS Innovate 2024

SAS Innovate 2024 provided insight into evolving approaches to analytics modernisation, platform development and applied data science across multiple industries. This document captures observations from sessions addressing strategic platform migrations, unified analytics environments, enterprise integration patterns and practical applications in regulated sectors. The content reflects a discipline transitioning from experimental implementations to production-grade, business-critical infrastructure.

Strategic Platform Modernisation

A presentation from DNB Bank detailed the organisation's migration from SAS 9.4 to SAS Viya on Microsoft Azure. The strategic approach proved counter-intuitive: whilst SAS Viya supports both SAS and Python code seamlessly, DNB deliberately chose to rewrite their legacy SAS code library into Python. The rationale combined two business objectives. First, expanding the addressable talent market by tapping into the global Python developer pool. Second, creating a viable exit strategy from their primary analytics vendor, ensuring compliance with financial regulatory requirements to demonstrate realistic vendor transition options within 30 to 90 days.

This decision represents a fundamental shift in enterprise software value propositions. Competitive advantage no longer derives from creating vendor lock-in, but from providing powerful, stable and governed environments that fully embrace open-source tools. The winning strategy involves convincing customers to remain because the platform delivers undeniable value, not because departure presents insurmountable difficulty. This is something that signals the maturing of a market, where value flows through partnership rather than proprietary constraints.

Unified Analytics Environments

A healthcare analytics presentation addressed the persistent debate between low-code/no-code interfaces for business users and professional coding environments for data scientists. Two analysts tackled identical problems (predicting diabetes risk factors using a public CDC dataset) using different approaches within the same platform.

The low-code user employed SAS Viya's Model Studio, a visual interface. This analyst assessed the model for statistical bias against variables such as age and gender by selecting a configuration option, whereupon the platform automatically generated fairness statistics and visualisations.

The professional coder used SAS Viya Workbench, a code-first environment similar to Visual Studio Code. This analyst manually wrote code to perform identical bias assessments. However, direct code access enabled fine-tuning of variable interactions (such as age and cholesterol), ultimately producing a logistic regression model with marginally superior performance compared to the low-code approach.

The demonstration illustrated that the debate presents a false dichotomy. The actual value resides in unified platforms, enabling both personas to achieve exceptional productivity. Citizen data scientists can rapidly build and validate baseline models, whilst expert coders can refine those same models with advanced techniques and deploy them, all within a single ecosystem. This unified approach characterises disciplinary development, where focus shifts from tribal tool debates to collective problem-solving.

Analytics as Enterprise Infrastructure

Multiple architectural demonstrations illustrated analytics platforms evolving beyond sophisticated workbenches for specialists into the central nervous system of enterprise operations. Three distinct patterns emerged:

The AI Assistant Architecture: A demonstration featured a customer-facing AI assistant built with Azure OpenAI. When users interacted with the chatbot regarding credit risk, requests routed through Azure Logic App not to the large language model for decisions but to a SAS Intelligent Decisioning engine. The SAS engine functioned as the trusted decision core, executing business rules and models to generate real-time risk assessments, which returned to the chatbot for customer delivery. SAS provided not the interface but the automated decision engine.

The Digital Twin Pattern: A pharmaceutical use case described using historical data from penicillin manufacturing batches to train machine learning models. These models became digital twins of physical bioreactors. Rather than conducting costly and time-consuming physical experiments, researchers executed thousands of in silico simulated experiments, adjusting parameters in the model to discover the optimal recipe for maximising yield (the "Golden Batch").

The Microsoft 365 Automation Hub: A workflow demonstration showed SAS programmes functioning as critical nodes in Microsoft 365 ecosystems. The automated process involved SAS code accessing SharePoint folders, retrieving Excel files, executing analyses, generating new reports as Excel files and delivering those reports directly into Microsoft Teams channels for business users.

These patterns mark profound evolution. Analytics platforms are moving beyond sophisticated calculators for experts, becoming foundational infrastructure: the connective tissue enabling intelligent automation and integrating disparate systems such as cloud office suites, AI interfaces and industrial hardware into cohesive business processes. This evolution from specialised tool to core infrastructure clearly indicates analytics' growing maturity within enterprise contexts.

Applied Data Science in High-Stakes Environments

Whilst much data science narrative focuses on e-commerce recommendations or marketing optimisation, compelling applications tackle intensely human, high-stakes operational challenges. Heather Hallett, a former ICU nurse and healthcare industry consultant at SAS, presented on improving hospital efficiency.

She described the challenge of staffing intensive care units, where having appropriate nurse numbers with correct skills proves critical. Staffing decisions constitute "life and death decisions". Her team uses forecasting models (such as ARIMA) to predict patient demand and optimisation algorithms (including mixed-integer programming) to create optimal nurse schedules. The optimisation addresses more than headcount; it matches nurses' specific skills, such as certifications for complex assistive devices like intra-aortic balloon pumps, to forecasted needs of the sickest patients.

A second use case applied identical operational rigour to community care. Using the classic "travelling salesman solver" from optimisation theory, the team planned efficient daily routes for mobile care vans serving maximum numbers of patients in their homes, delivering essential services to those unable to reach hospitals easily.