TOPIC: GOOGLE CHROME

An unseen arsenal: How web developers can use specialised tools to build better websites

Modern web development takes place within an ecosystem of tools so precisely suited to individual tasks that they often go unnoticed by anyone outside the profession. These utilities, spanning performance analysers, security checkers and colour palette generators, form the backbone of a workflow that must balance speed, security and visual consistency. For an industry where user experience and technical efficiency are inseparable priorities, such tools are far from optional luxuries.

Performance Testing and Page Speed Analysis

The first hurdle most developers encounter is performance measurement, and several tools have established themselves as essential in this space. GTmetrix, Google PageSpeed Insights and WebPageTest each draw on Google's open-source Lighthouse framework to varying degrees, though each approaches the task differently.

GTmetrix produces a performance grade for any URL submitted to it, alongside separate scores for page speed and structural quality. It measures Largest Contentful Paint (LCP) and Cumulative Layout Shift (CLS), two of the Core Web Vitals that Google uses as ranking signals in search, along with Total Blocking Time (TBT), a laboratory metric that stands in for interactivity. The tool can run tests from multiple global server locations and simulates a real browser loading your page, producing a waterfall chart and a video replay of the load process, so developers can identify precisely which elements are causing delays.

Maintained directly by Google, PageSpeed Insights analyses pages against both laboratory data generated through Lighthouse and real-world field data drawn from the Chrome User Experience Report (CrUX). It provides separate performance scores for mobile and desktop, which is significant given that Google confirmed page speed as a ranking factor for mobile searches in July 2018. Both GTmetrix and PageSpeed Insights go well beyond raw figures, mapping out a prioritised list of optimisations so that developers can address the most impactful issues first.

WebPageTest occupies a different position in the toolkit. Originally created by Patrick Meenan and open-sourced in 2008, it was acquired by Catchpoint in 2020. Rather than returning a simple score, it runs tests from a choice of locations across the globe using real browsers at actual connection speeds, and produces detailed waterfall charts that break down every individual network request. This makes it the tool of choice when the question is not just how fast a page is, but precisely why a particular element is slow.

One of the longer-established names in website speed testing, Pingdom offers a free tool that remains widely used for its accessible reporting. Tests can be run from seven global server locations, and results are presented in four sections: a waterfall breakdown, a performance grade, a page analysis and a historical record of previous tests. The page analysis breaks down asset sizes by domain and content type, which is useful for comparing the weight of CDN-served assets against those served directly. Pingdom is based on the YSlow open-source project and does not currently measure the Core Web Vitals metrics that Google uses as ranking signals, so it is best treated as a quick and readable first pass rather than a definitive audit.

Security and Infrastructure Diagnostics

Performance alone cannot sustain a trustworthy website, as a misconfigured certificate, an insecure resource or a flagged IP address can each undermine user confidence and search visibility. One of the most frustrating post-migration problems is the disappearance of the HTTPS padlock despite an SSL certificate being in place, and Why No Padlock? exists specifically to address it. The cause is almost always mixed content, where a page served over HTTPS loads at least one resource (an image, a script or a stylesheet) over plain HTTP. Why No Padlock? scans any HTTPS URL and returns a list of every insecure resource found, along with the HTML element responsible, making it straightforward to trace and resolve the problem. Google has used HTTPS as a ranking signal since 2014, so unresolved mixed content issues carry an SEO cost as well as a security one.
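To make the idea of mixed content concrete, here is a minimal sketch of the kind of check involved, written with Python's standard library only. It is not how Why No Padlock? itself works; the names `MixedContentFinder` and `find_mixed_content` are hypothetical, and the list of tags covered is an assumption that a real scanner would extend (CSS `url()` references and inline scripts are ignored here).

```python
from html.parser import HTMLParser

# Tags that commonly pull in subresources, mapped to the attribute holding the URL.
# This list is illustrative, not exhaustive.
RESOURCE_ATTRS = {
    "img": "src",
    "script": "src",
    "iframe": "src",
    "audio": "src",
    "video": "src",
    "source": "src",
    "link": "href",
}


class MixedContentFinder(HTMLParser):
    """Collect (tag, url) pairs for resources referenced over plain HTTP."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        wanted = RESOURCE_ATTRS.get(tag)
        if wanted is None:
            return
        attr_map = dict(attrs)
        if tag == "link":
            # Only stylesheet links are fetched as page resources;
            # rel="canonical" and similar are plain references.
            rel = (attr_map.get("rel") or "").lower().split()
            if "stylesheet" not in rel:
                return
        url = attr_map.get(wanted)
        if url and url.lower().startswith("http://"):
            self.insecure.append((tag, url))


def find_mixed_content(html):
    """Return every insecure resource found in the given HTML string."""
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure
```

Passing the HTML of an HTTPS page to `find_mixed_content` returns the offending tag and URL for each insecure resource, which mirrors the element-by-element report described above. Ordinary `<a href>` links are deliberately left out, since linking to an HTTP page does not count as mixed content.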

For traffic-level threats, AbuseIPDB operates as a community-maintained IP blacklist. Managed by Marathon Studios Inc., the project allows system administrators and webmasters to report IP addresses involved in malicious behaviour, including hacking attempts, spam campaigns, DDoS attacks and phishing, and to check any IP address against the database before acting on traffic from it. A free API is available for integration with server tools such as Fail2Ban, enabling automatic reporting and real-time checks.

Bot traffic and automated form submissions are a persistent nuisance for any site that accepts user input, and hCaptcha addresses this by presenting challenges that are straightforward for human visitors but reliably difficult for automated scripts. Operated by Intuition Machines, it positions itself as a privacy-focused alternative to reCAPTCHA, collecting minimal data and retaining no personally identifiable information beyond what is necessary to complete a challenge. It is compliant with GDPR, CCPA and several other international privacy frameworks, and holds both ISO 27001 and SOC 2 Type II certifications. A free tier is available, with a Pro plan covering 100,000 evaluations per month, and an Enterprise tier offering additional controls including data localisation and zero-PII processing modes.

Red Sift offers two distinct products that address different aspects of infrastructure security, both relevant to the day-to-day operation of a website. Red Sift OnDMARC automates the configuration and monitoring of DMARC, SPF, DKIM, BIMI and MTA-STS, which are the protocols that collectively prevent attackers from sending spoofed emails that appear to originate from a legitimate domain. This is the basis for most phishing and business email compromise (BEC) attacks, and OnDMARC guides teams to full enforcement typically within six to eight weeks. Red Sift Certificates Lite addresses a separate but equally critical concern, monitoring SSL/TLS certificates for upcoming expiry and alerting administrators seven days ahead of time. It is free for up to 250 certificates and has been formally recommended by Let's Encrypt as its preferred monitoring service, following the retirement of Let's Encrypt's own expiry notification emails. The product was built on the foundation of Hardenize, which Red Sift acquired in 2022, a company founded by Ivan Ristić, creator of SSL Labs.
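The expiry check that such monitoring performs can be sketched in a few lines of standard-library Python. This is only an illustration of the principle and says nothing about how Red Sift implements it; the function names are hypothetical, and the seven-day default simply mirrors the alert window mentioned above.

```python
import socket
import ssl
from datetime import datetime, timezone


def fetch_not_after(hostname, port=443, timeout=5.0):
    """Connect to a host and return its certificate's notAfter string."""
    context = ssl.create_default_context()
    with socket.create_connection((hostname, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=hostname) as tls:
            return tls.getpeercert()["notAfter"]


def days_until_expiry(not_after, now=None):
    """Whole days between `now` and a notAfter value.

    `not_after` uses the format returned by getpeercert(),
    for example "Jun  1 12:00:00 2030 GMT".
    """
    expires = datetime.fromtimestamp(
        ssl.cert_time_to_seconds(not_after), tz=timezone.utc
    )
    now = now or datetime.now(timezone.utc)
    return (expires - now).days


def needs_alert(not_after, threshold_days=7, now=None):
    """True when the certificate expires within the alert window."""
    return days_until_expiry(not_after, now) <= threshold_days
```

A scheduled job could call `needs_alert(fetch_not_after("example.com"))` for each monitored host and send a notification when it returns true. Separating the network fetch from the date arithmetic keeps the latter easy to test.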

Colour Management and Visual Design

A website's visual coherence depends heavily on colour consistency, and the distance between a palette sketched on paper and one that functions in code can be significant. With over two million active users, Coolors is a fast and intuitive palette generator built around a simple interaction: pressing the space bar produces a new five-colour palette derived from colour theory algorithms. The platform includes an accessibility checker that calculates contrast ratios against WCAG standards and a colour extractor that derives palettes from uploaded photographs. It also offers interoperability with Figma, Adobe Creative Suite and the Chrome browser. A free tier is available, with a Pro plan at approximately $3 per month for unlimited saving and export options.
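The contrast ratio that accessibility checkers report follows a published WCAG formula, so it is easy to reproduce. The sketch below implements the WCAG 2.x relative luminance and contrast ratio calculations; the function names are hypothetical, and this is not a description of Coolors' own code.

```python
def _linearise(channel):
    """Convert an 8-bit sRGB channel to its linear-light value."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """WCAG relative luminance of an (r, g, b) tuple with 0-255 channels."""
    r, g, b = (_linearise(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """WCAG contrast ratio, from 1 (identical) up to 21 (black on white)."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa_normal_text(foreground, background):
    """WCAG AA asks for at least 4.5:1 for normal-sized text."""
    return contrast_ratio(foreground, background) >= 4.5
```

Because the ratio always divides the lighter luminance by the darker one, the order in which the two colours are supplied does not matter.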

A quite different approach is taken by Colormind, which uses a deep learning model based on Generative Adversarial Networks (GANs) to generate harmonious colour schemes. The model is trained on datasets drawn from photographs, films, popular art and website designs, and is updated daily with fresh material. A particularly useful feature allows users to preview how a generated palette would look applied to a website layout, which is a more direct test of practicality than viewing swatches in isolation. A REST API is available for personal and non-commercial use. For converting between colour formats, tools such as Color-Hex, RGBtoHex and the WebFX Hex to RGB converter bridge the gap between design decisions and code implementation, translating colour values in both directions between the hexadecimal and RGB formats that CSS requires.
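The conversion those tools perform is simple arithmetic: each pair of hexadecimal digits encodes one 0-255 channel. A minimal sketch in Python, with hypothetical function names, looks like this:

```python
def hex_to_rgb(value):
    """Convert "#rrggbb" or CSS shorthand "#rgb" to an (r, g, b) tuple."""
    digits = value.lstrip("#")
    if len(digits) == 3:
        # Expand shorthand: "0af" becomes "00aaff".
        digits = "".join(ch * 2 for ch in digits)
    if len(digits) != 6:
        raise ValueError(f"expected 3 or 6 hex digits, got {value!r}")
    return tuple(int(digits[i:i + 2], 16) for i in (0, 2, 4))


def rgb_to_hex(rgb):
    """Convert an (r, g, b) tuple with 0-255 channels to "#rrggbb"."""
    if len(rgb) != 3 or any(not 0 <= v <= 255 for v in rgb):
        raise ValueError(f"expected three channels in 0-255, got {rgb!r}")
    return "#{:02x}{:02x}{:02x}".format(*rgb)
```

Round-tripping a value through both functions should return the original colour, which is a quick way to check a palette exported from a design tool before it goes into a stylesheet.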

Optimisation and Code Utilities

Lean, efficient code is a direct contributor to load speed, and unused CSS is a surprisingly common source of unnecessary page weight. PurifyCSS Online addresses this by scanning a website's HTML and JavaScript source against its stylesheets to identify selectors that are never used. CSS frameworks such as Bootstrap or Tailwind ship with many utility classes, and most websites use only a small fraction of them. Removing the unused rules can reduce stylesheet file size substantially, which in turn shortens the time a browser spends processing styles before rendering a page. The online version requires no build pipeline or command-line tools, making it accessible to developers at any workflow stage.
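The underlying comparison can be illustrated with a deliberately simplified sketch that only considers class selectors. It is not PurifyCSS's algorithm: the names are hypothetical, it ignores IDs, element selectors and CSS comments, and it does not see classes that JavaScript adds at runtime, which is exactly why the real tool scans scripts too.

```python
import re
from html.parser import HTMLParser

# A dot followed by a CSS identifier; ".5em" style numbers do not match.
CLASS_SELECTOR = re.compile(r"\.(-?[_a-zA-Z][\w-]*)")


class ClassCollector(HTMLParser):
    """Gather every class name that appears in class="..." attributes."""

    def __init__(self):
        super().__init__()
        self.classes = set()

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name == "class" and value:
                self.classes.update(value.split())


def unused_class_selectors(html, css):
    """Return class names declared in the CSS but absent from the HTML."""
    collector = ClassCollector()
    collector.feed(html)
    declared = set()
    # Only inspect the selector text that precedes each "{".
    for selector_text in re.findall(r"([^{}]+)\{", css):
        declared.update(CLASS_SELECTOR.findall(selector_text))
    return sorted(declared - collector.classes)
```

Even this crude version shows why framework stylesheets shrink so much: any class that never appears in the markup ends up in the returned list.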

Image compression is equally important, as unoptimised images are among the most common causes of slow load times. ImageCompressor handles JPEG, PNG, WebP, GIF and SVG files in the browser, applying lossy or lossless algorithms with adjustable quality settings to reduce file sizes without visible degradation, and processes everything locally, which means that no images are uploaded to an external server.

Contact forms and directory listings on websites are a persistent target for spam harvesters, and Email Obfuscator encodes email addresses into a format that is readable by browsers but opaque to most automated scrapers, generating both a plain HTML entity version and a JavaScript-dependent alternative for stronger protection.
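The HTML entity approach to hiding an email address is straightforward to demonstrate: each character is replaced by its numeric character reference, which browsers decode when rendering. The sketch below shows the general technique with hypothetical function names; it is an assumption that the Email Obfuscator tool produces something comparable.

```python
import html


def obfuscate_email(address):
    """Encode every character as a decimal HTML character reference."""
    return "".join(f"&#{ord(ch)};" for ch in address)


def mailto_link(address):
    """Build a mailto anchor whose href and label are both entity-encoded."""
    encoded = obfuscate_email(address)
    return f'<a href="mailto:{encoded}">{encoded}</a>'


def decode_for_check(encoded):
    """Decode the entities again, as a browser would, to verify the output."""
    return html.unescape(encoded)
```

Entity encoding only deters scrapers that search raw markup for something shaped like an address. A harvester that decodes entities first will still find it, which is where a JavaScript-dependent variant offers stronger protection.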

For websites that publish mathematical or scientific content, QuickLaTeX provides a practical solution to embedding equations in web pages without a local LaTeX installation. Authors write standard LaTeX expressions directly in their content, and the service renders them as high-quality images that are cached and returned via URL for embedding. Its companion WordPress plugin, WP QuickLaTeX, handles this process automatically within the editor, supporting inline formulas, numbered displayed equations and TikZ graphics.

Server Response and Infrastructure Monitoring

Infrastructure performance sits beneath the layer that most visitors ever see, yet it determines how quickly any content reaches a browser at all, and Time to First Byte (TTFB) is the metric that captures this most directly. It measures the interval between a browser sending an HTTP request and receiving the first byte of data from the server, and ByteCheck exists solely to measure it. The metric captures the combined effect of DNS resolution time, TCP connection time, SSL negotiation time and server processing time. Google considers a TTFB of 200ms or below to be good, and ByteCheck breaks the total down into each constituent step, so developers can identify precisely where delays are occurring. Slow TTFB is often a server-side issue, such as inadequate caching, an overloaded database or a lack of a content delivery network (CDN).
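Since TTFB is a sum of phases, a small model makes the breakdown easier to reason about. The sketch below assumes the per-phase timings have already been measured by some other tool; the class and field names are hypothetical, and the 200ms threshold is the figure quoted above.

```python
from dataclasses import dataclass

GOOD_TTFB_MS = 200  # threshold quoted in the text above


@dataclass
class TtfbBreakdown:
    """Per-phase timings in milliseconds for a single request."""

    dns_ms: float
    connect_ms: float
    tls_ms: float
    server_ms: float

    def phases(self):
        return {
            "dns": self.dns_ms,
            "connect": self.connect_ms,
            "tls": self.tls_ms,
            "server": self.server_ms,
        }

    @property
    def total_ms(self):
        """TTFB is the sum of every phase before the first byte arrives."""
        return sum(self.phases().values())

    def is_good(self):
        return self.total_ms <= GOOD_TTFB_MS

    def slowest_phase(self):
        """Name of the phase contributing the most time."""
        phases = self.phases()
        return max(phases, key=phases.get)
```

With timings of 20, 30, 45 and 180 milliseconds, for example, the total comes to 275ms and the server phase dominates, pointing to caching or database work rather than to DNS or network tuning.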

Analytics and Content Evaluation

The final layer of tooling concerns understanding what content a site serves and how it performs in context. Dandelion is a natural language processing API developed by SpazioDati that can extract entities, classify text and analyse the semantic content of web pages, which has applications in content tagging, SEO auditing and editorial quality control. A free tier, covering up to 1,000 API units per day, is available without a credit card, making it accessible for developers who need semantic analysis at low to moderate volume.

Quiet Workhorses of the Web

Individually, each of these tools addresses a specific and well-defined problem. Taken together, they form a coherent toolkit that covers the full lifecycle of a web project, from initial performance diagnosis through to deployment of a secure, efficiently coded and visually consistent site. They do not replace professional judgement but extend it, handling time-consuming checks and conversions that would otherwise consume the attention needed for more complex work. As websites grow in complexity and user expectations continue to rise, familiarity with this kind of specialist tooling becomes a practical necessity rather than an optional extra.

Comet and Atlas: Navigating the security risks of AI Browsers

The arrival of the ChatGPT Atlas browser from OpenAI on 21st October has lured me into some probing of its possibilities. While Perplexity may have launched its Comet browser first on 9th July, their tendency to put news under our noses in other places had turned me off them. It helps that the former is offered at no extra charge to ChatGPT users, while the latter comes with a free tier and an optional Plus subscription plan. My having a Mac means that I do not need to await Windows and mobile versions of Atlas, either.

Both aim to interpret pages, condense information and carry out small jobs that cut down the number of clicks. Atlas does so with a sidebar that can read multiple documents at once and an Agent Mode that can execute tasks in a semi-autonomous way, while Comet leans into shortcut commands that trigger compact workflows. However, both browsers are beset by security issues that give enough cause for concern that added wariness is in order.

In many ways, they appear to be solutions looking for problems to address. In Atlas, I found the Agent mode needed added guidance when checking the content of a personal website for gaps. Jobs can become too big for it, so they need everything broken down. Add in the security concerns mentioned below, and enthusiasm for seeing what they can do gets blunted. When you see Atlas adding threads to your main ChatGPT roster, that gives you a hint as to what is involved.

The Security Landscape

Both Comet and Atlas are susceptible to indirect prompt injection, where pages contain hidden instructions that the model follows without user awareness, and AI sidebar spoofing, where malicious sites create convincing copies of AI sidebars to direct users into compromising actions. Furthermore, demonstrations have included scenarios where attackers steal cryptocurrency and gain access to Gmail and Google Drive.

For instance, Brave's security team has described indirect prompt injection as a systemic challenge affecting the whole class of AI-augmented browsers. Similarly, Perplexity's security group has stated that the phenomenon demands rethinking security from the ground up. In a test involving 103 phishing attacks, Microsoft Edge blocked 53 percent and Google Chrome 47 percent, yet Comet blocked 7 percent and Atlas 5.8 percent.

Memory presents an additional attack surface because these tools retain information between sessions, and researchers have demonstrated that memory can be poisoned by carefully crafted content, with the taint persisting across sessions and devices if synchronisation is enabled. Shadow IT adoption has begun: within nine days of launch, 27.7 percent of enterprises had at least one Atlas download, with uptake in technology at 67 percent, pharmaceuticals at 50 percent and finance at 40 percent.

Mitigating the Risks

Sensibly, security practitioners recommend separating ordinary browsing from agentic browsing. Here, it helps that AI browsers are cut-down items anyway, at least based on my experience of Atlas. Figuring out what you can do with them using public information in a read-only manner will be enough at this point. In any event, it is essential to keep them away from banking, health, personal accounts, credentials, payments and regulated data until security improves.

As one precaution, maintaining separate AI accounts could act as a boundary to contain potential compromises, though this does not address the underlying issue that prompt injection manipulates the agent's decision-making processes. With Atlas, disable Browser Memories and per-site visibility by default, with explicit opt-ins only on specific public sites. Additionally, use Agent Mode only when not logged into any accounts. Furthermore, do not import passwords or payment methods. With Comet, use narrowly scoped shortcuts that operate on public information and avoid workflows involving sign-ins, credentials or payments.

Small businesses can run limited pilots in non-sensitive areas with strict allow and deny lists, then reassess by mid-2026 as security hardens. Large enterprises, meanwhile, should adopt a block-and-monitor stance while developing governance frameworks that anticipate safer releases in 2026 and 2027. In parallel, security teams should watch for circumvention attempts and prepare policies that separate public research from sensitive work, mandate safe defaults and prohibit connections to confidential systems. Finally, training is necessary because users need to understand the specific risks these browsers present.

How Competition Might Help

Established browser vendors are adding AI capabilities on top of existing security infrastructure. Chrome is integrating Gemini, and Edge is incorporating Copilot more tightly into the workflow. Meanwhile, Brave continues with a privacy-first stance through Leo, while Opera's Aria, Arc with Dia and SigmaOS reflect different approaches. Current projections suggest that major browsers will introduce safer AI features in the final quarter of 2025, that the first enterprise-ready capabilities will arrive in the first half of 2026 and that by 2027 AI-assisted browsing will be standard and broadly secure.

Competition from Chrome and Edge will drive AI assistance into more established security frameworks, while standalone AI browsers will work to address their security gaps. Mitigations for prompt injection and sidebar spoofing will likely involve layered approaches combining detection, containment and improved user interface signals. Until then, Comet and Atlas can provide productivity benefits in public-facing work and research, but their security posture is not suitable for sensitive tasks. Use the tools where the risk is acceptable, keep sensitive work in conventional browsers, and anticipate that safer versions will become standard over the next two years.

Get web links from Outlook emails and Teams chats to open in your web browser of choice

By default, web links from either Outlook (here, I am referring to the Classic version and not the newer web appliance version that Microsoft would like us all to use, though many think it to be feature-incomplete) or Teams open in Edge, which may not be everyone's choice of web browser. Many choose Google Chrome, while I mainly use Mozilla Firefox, with Brave being another option that I have.

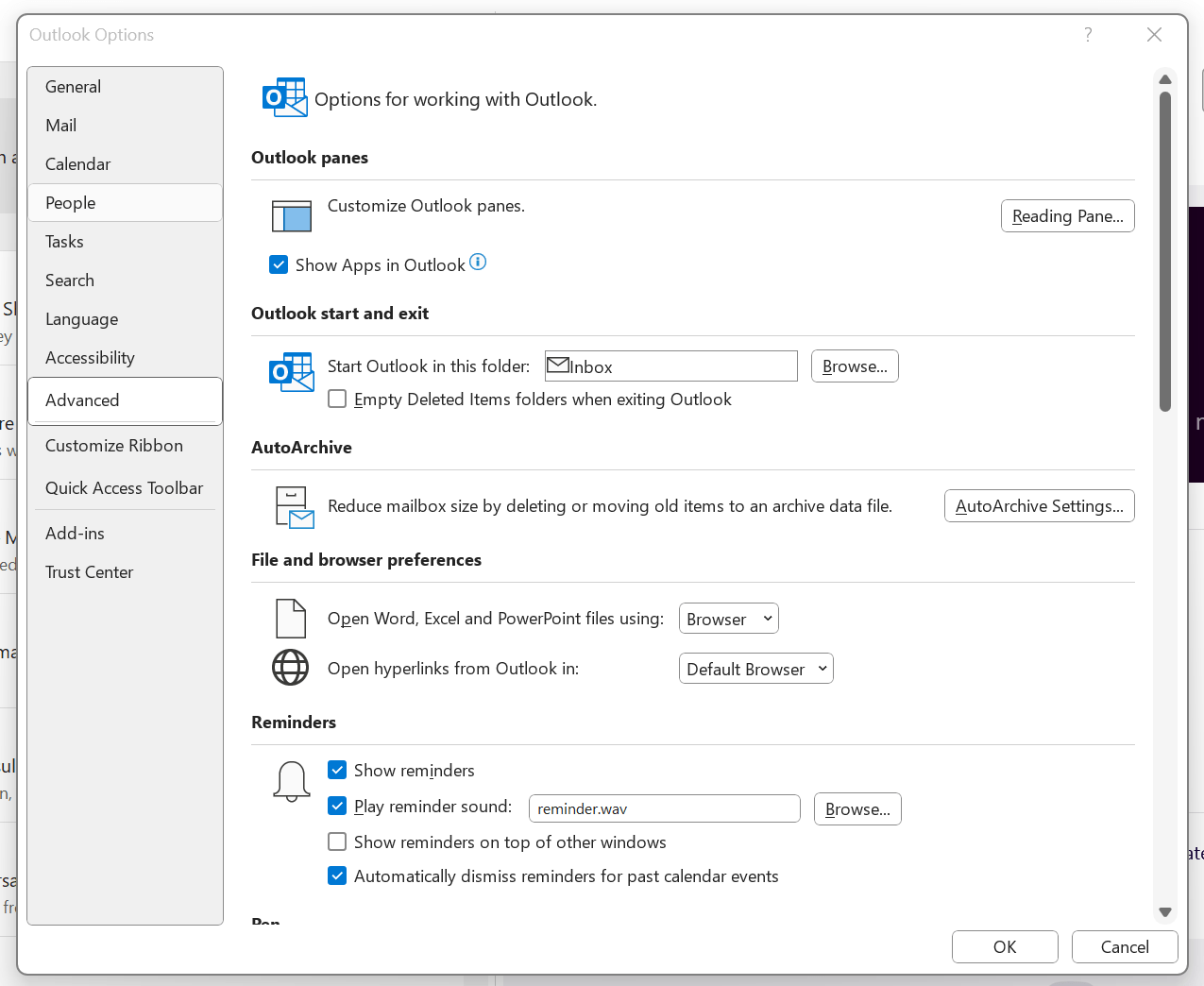

To get both Outlook and Teams to use your default system web browser, go to Outlook and navigate to File > Options > Advanced > File and browser preferences. Once there, look for the line with Open hyperlinks from Outlook in. The dropdown box will show Microsoft Edge by default, but there is another option: Default Browser. Choosing that will change things away from Edge to your chosen browser, assuming that you have set it by default using the Settings application.

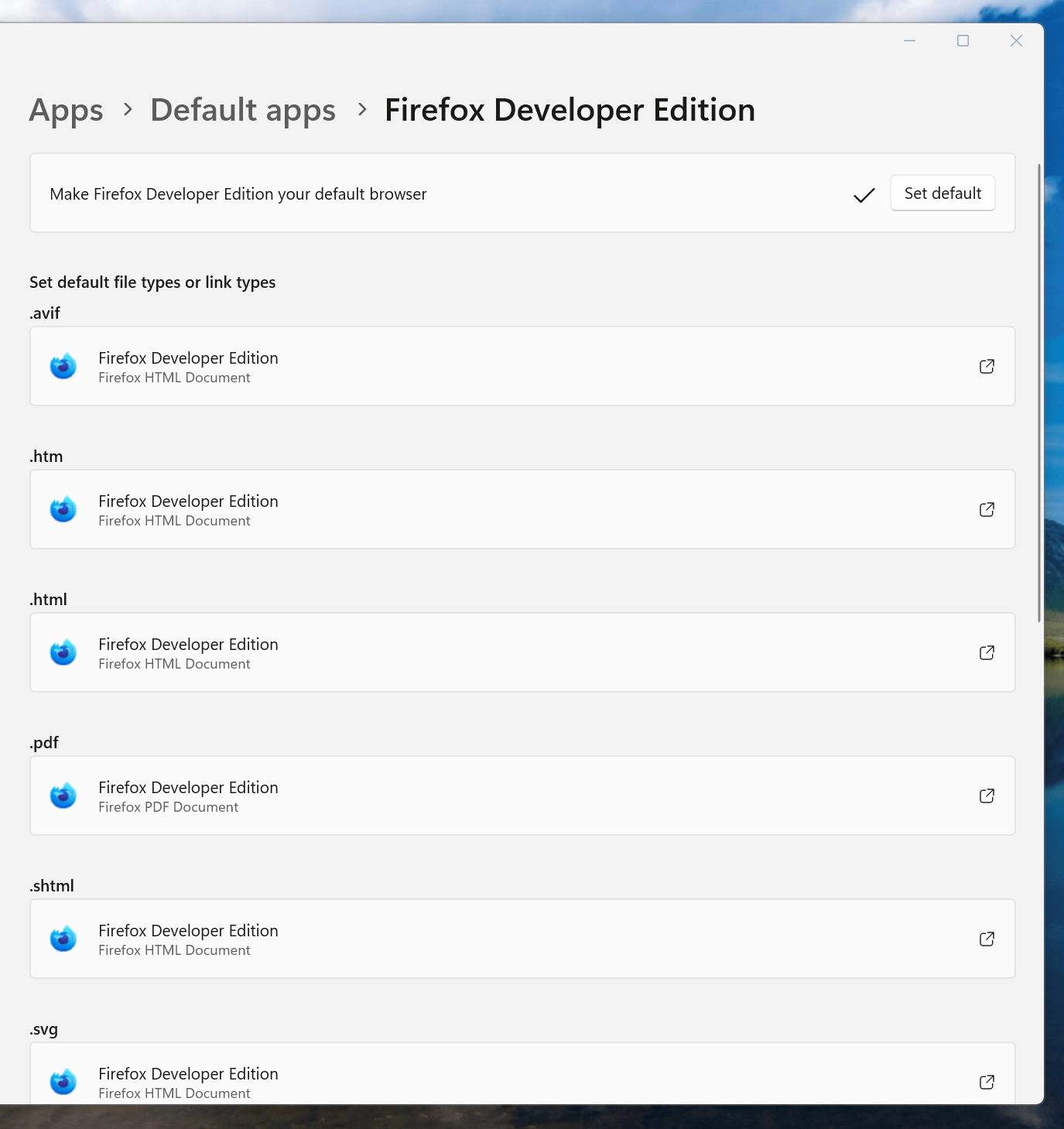

If you have not done that yet, open Settings and navigate to Apps > Default apps. Once there, find the entry for the browser that you want to use and click on the Set default button. You will also see a list of file types, and you may need to change the setting for those as well. Once the system default is sorted, it will be honoured by Outlook and Teams too.

So you just need a web browser?

When Google announced that it was working on an operating system, it was bound to result in a frisson of excitement. However, a peek at the preview edition that has been doing the rounds confirms that Chrome OS is a very different beast from those operating systems to which we are accustomed. The first thing that you notice is that it only starts up the Chrome web browser. In this, it is like a Windows terminal server session that opens just one application. Of course, in Google's case, that one piece of software is the gateway to its usual collection of productivity software like Gmail, Calendar, Docs & Spreadsheets and more. Then, there are offerings from others too, with Microsoft just beginning to come into the fray to join Adobe and many more. As far as I can tell, all files are stored remotely, so I reckon that adding the possibility of local storage and management of those local files would be a useful enhancement.

With Chrome OS, Google's general strategy starts to make sense. First create a raft of web applications, follow them up with a browser and then knock up an operating system. It just goes to show that Google Labs doesn't simply churn out stuff for fun, but that there is a serious point to their endeavours. In fact, you could say that they sucked us in to a point along the way. Speaking for myself, I may not entrust all of my files to storage in the cloud, yet I am perfectly happy to entrust all of my personal email activity to Gmail. It's the widespread availability and platform independence that has done it for me. For others spread between one place and another, the attractions of Google's other web apps cannot be overstated. Maybe, that's why they are not the only players in the field either.

With the rise of mobile computing, that kind of portability is the opportunity that Google is trying to use to its advantage. For example, mobile phones are being used for things now that would have been unthinkable a few years back. Then, there's the netbook revolution started by Asus with its Eee PC. All of this is creating an ever more internet-connected bunch of people, so having devices that connect straight to the web like they would with Chrome OS has to be a smart move. Some may decry the idea that Chrome OS will be available on a device-only basis, but I suppose they have to make money from this too; search can only pay for so much, and they have experience with Android too.

There have been some who wondered about Google's activities killing off Linux and giving Windows a good run for its money; Chrome OS seems to be a very different animal to either of these. It looks as if it is a tool for those on the move, an appliance, rather than the pure multipurpose tools that operating systems usually are. If there is a symbol of what an operating system usually means for me, it's the ability to start with a bare desktop and decide what to do next. Transparency is another plus point, with the Linux command line having that in spades. For those who view PCs purely as means to get things done, such interests are peripheral, and it is for these that the likes of Chrome OS have been created. In other words, the Linux community need to keep an eye on what Google is doing but should not take fright because there are other things that Linux always will have as unique selling points. Even though the same sort of thing applies to Windows too, Microsoft's near stranglehold on the enterprise market will take a lot of loosening, perhaps keeping Chrome OS in the consumer arena. Counterpoints to that include the use of Gmail for enterprise email by some companies and the increasing footprint of web-based applications, even bespoke ones, in business computing. In fact, it's the latter that can be blamed for any tardiness in Internet Explorer development. In summary, Chrome OS is a new type of thing rather than a replacement for what's already there. We may find that co-existence is how things turn out, but what it means for Linux in the netbook market is another matter. Only time will tell on that one.

A late "advance" sighting?

Somewhat infuriatingly, Google released its own browser, Chrome, into the wild near the end of last year, though only for Windows. My experiences with it on that platform are that it works smoothly, albeit without many of the bells and whistles that can be got for Firefox. While an unofficial partial port was achieved using Crossover Chromium and there is the Chromium project with all its warnings and the possibility to add a repository for its wares to Ubuntu's software sources, we have been tantalised rather than served so far. However, that was recently bettered by the release of early access versions. In reality, these can be said to be alpha versions so not everything works, but it's still Chrome and without the need for Windows or WINE. The rendering engine, most importantly, seems to be the equal of what you get on Windows, while ancillary functions like bookmark handling seem incomplete. In summary, the currently available deb packages are a work in progress, yet that's better than not having anything at all.