Enlarging a VirtualBox VDI virtual disk

20th December 2018

It is amazing how the Windows folder manages to grow on your C drive, and one in a Windows 7 installation was the cause of my needing to expand the VirtualBox virtual machine VDI disk on which it was installed. After trying various ways to cut down the size, an enlargement could not be avoided. In all of this, it was handy that I had a recent backup for restoration after any damage.

The same thing meant that I could resort to enlarging the VDI file with more peace of mind than otherwise might have been the case. This needed use of the command line once the VM was shut down. The form of the command that I used was the following:

VBoxManage modifyhd <filepath/filename>.vdi --resize 102400

It appears that this also would work on a Windows host but mine was Linux and it did what I needed. The next step was to attach it to an Ubuntu VM and use GParted to expand the main partition to fill the newly available space. That does not mean that it takes up 100 GiB on my system just yet because these things can be left to grow over time and there is a way to shrink them too if you ever need to do just that. As ever, having a backup made before any such operation may have its uses if anything goes awry.
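The figure passed to --resize is a size in megabytes, so getting it from a target in GiB is simple arithmetic. What follows is only a minimal sketch: gib_to_mib is a made-up helper name and the disk path is a placeholder, and the command is printed rather than run.

```shell
# Convert a target size in GiB to the megabyte figure that --resize expects.
# gib_to_mib is a hypothetical helper name used only for illustration.
gib_to_mib() {
    echo $(( $1 * 1024 ))
}

# Print (rather than run) the resize command for a 100 GiB disk.
# The path below is a placeholder; substitute your own VDI file.
echo "VBoxManage modifyhd /path/to/disk.vdi --resize $(gib_to_mib 100)"
```

Printing the command first gives a chance to check the number before anything touches the disk image.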

Sorting out sluggish start-up and shutdown times in Linux Mint 19

9th August 2018

The Linux Mint team never pushes anyone into upgrading to the latest version of their distribution but curiosity often is strong enough an impulse to make me do just that. When it brings me across some rough edges, then the wisdom of leaving things alone is evident. Nevertheless, doing so also brings its share of learning and that is what I am sharing in this post. It also allows me to collect a number of titbits that may be of use to others.

Again, I went with the in-situ upgrade option though the addition of the Timeshift backup tool means that it is less frowned upon than once would have been the case. It worked well too, apart from slow start-up and shutdown times, so I set about tracking down the causes on the two machines that I have running Linux Mint. As it happens, the cause was different on each machine.

On one PC, it was networking that was holding things up. The cause was my specifying a fixed IP address in /etc/network/interfaces instead of using the Network Settings GUI tool. Resetting the configuration file back to its defaults and using the Cinnamon settings interface took away the delays. It was inspecting /var/log/boot.log that highlighted the problem so that is worth checking if I ever encounter slow start times again.

As I mentioned earlier, the second PC had a very different problem though it also involved a configuration file. What had happened was that /etc/initramfs-tools/conf.d/resume contained the wrong UUID for my system’s swap drive so I was seeing messages like the following:

W: initramfs-tools configuration sets RESUME=UUID=<specified UUID for swap partition>

W: but no matching swap device is available.

I: The initramfs will attempt to resume from <specified file system location>

I: (UUID=<specified UUID for swap partition>)

I: Set the RESUME variable to override this.

Correcting the file and executing the following command fixed the issue by updating the affected initramfs image for all installed kernels and speeded up PC start-up times:

sudo update-initramfs -u -k all
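Before rebuilding the initramfs, it can help to see which UUID the resume file actually names and compare it with the swap partition that exists. This is a small sketch under my own assumptions: resume_uuid is a hypothetical helper name, and it expects the file to hold a line of the form RESUME=UUID=... as in the messages above.

```shell
# Extract the UUID from a RESUME=UUID=... line in the given file.
# resume_uuid is a hypothetical helper name used only for illustration.
resume_uuid() {
    sed -n 's/^RESUME=UUID=//p' "$1"
}

# Typical use on the affected machine (needs the real file to exist):
#   resume_uuid /etc/initramfs-tools/conf.d/resume
#   sudo blkid -t TYPE=swap    # lists swap partitions and their UUIDs
```

If the two values differ, the file is what needs correcting before running update-initramfs.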

Though it was not a cause of system sluggishness, I also sorted another message that I kept seeing during kernel updates and removals on both machines. This has been there for a while and causes warning messages about my system locale not being recognised. The problem has been described elsewhere as follows: /usr/share/initramfs-tools/hooks/root_locale is expecting to see individual locale directories in /usr/lib/locale but locale-gen is configured to generate an archive file by default. Issuing the following command sorted that:

sudo locale-gen --purge --no-archive

Following these, my new Linux Mint 19 installations have stabilised with more speedy start-up and shutdown times. That allows me to look at what is on Flathub to see what applications are there and whether they get updated to the latest version on an ongoing basis. That may be a topic for another entry on here but the applications that I have tried work well so far.

Compressing a VirtualBox VDI file for a Linux guest

6th June 2016

In a previous posting, I talked about compressing a virtual hard disk for a Windows guest system running in VirtualBox on a Linux system. Since then, I have needed to do the same for a Linux guest following some housekeeping. The Linux distribution used is Debian so the instructions are relevant to that and maybe its derivatives such as Ubuntu, Linux Mint and their kind.

While there are alternatives like dd, I am going to stick with a utility named zerofree to overwrite the newly freed up disk space with zeroes, which aids compression later on in the process. The first step is to install it using the following command:

apt-get install zerofree

Once that has been completed, the next step is to unmount the relevant disk partition. Luckily for me, what I needed to compress was an area that I reserved for synchronisation with Dropbox. If it was the root area where the operating system files are kept, a live distro would be needed instead. In any event, the required command takes the following form with the mount point being whatever it is on your system (/home, for instance):

sudo umount [mount point]

With the disk partition unmounted, zerofree can be run by issuing a command that looks like this:

zerofree -v /dev/sdxN

Above, the -v switch tells zerofree to display its progress and a continually updating percentage count tells you how it is going. The /dev/sdxN piece is generic with the x corresponding to the letter assigned to the disk on which the partition resides (a, b, c or whatever) and the N is the partition number (1, 2, 3 or whatever; before GPT, the maximum number of primary partitions was 4). Putting all this together, we get an example like /dev/sdb2.
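Since a mistyped device name is easy to make here, a quick sanity check on the /dev/sdxN form may be worthwhile before running anything. The sketch below is only illustrative: is_partition_name is a hypothetical helper, it only recognises the /dev/sdXN naming described above with partition numbers from 1 to 99, and it prints the zerofree command instead of executing it.

```shell
# Succeed only if the argument has the /dev/sdXN form described above,
# with a partition number between 1 and 99.
# is_partition_name is a hypothetical helper name used only for illustration.
is_partition_name() {
    case "$1" in
        /dev/sd[a-z][1-9]|/dev/sd[a-z][1-9][0-9]) return 0 ;;
        *) return 1 ;;
    esac
}

dev=/dev/sdb2   # example value; change to suit your system
if is_partition_name "$dev"; then
    echo "zerofree -v $dev"
else
    echo "$dev does not look like a partition" >&2
fi
```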

Once that has completed, the next step is to shut down the VM and execute a command like the following on the host Linux system ([file location/file name] needs to be replaced with whatever applies on your system):

VBoxManage modifyhd [file location/file name].vdi --compact

With the zero filling in place, there was a lot of space released when I tried this. While it would be nice for dynamic virtual disks to reduce in size automatically, I accept that there may be data integrity risks with those so the manual process will suffice for now. It has not been needed that often anyway.

Wiping of hard drives with Linux

2nd December 2013

More than a decade of computer upgrades and rebuilds can leave obsolete kit in your hands and the arrival of legislation controlling the dumping of electronic goods during this time can leave one wondering how anyone can dispose of them. Thankfully, I discovered that the local council refuse site only a few miles away from me accepts such things for recycling and saw me a good few times over the last summer with obsolete and non-working gadgets that had stayed with me far too long. Some were as bulky as a computer monitor and a printer but others were relatively diminutive.

Disposing of non-working and utterly obsolete equipment is an easy choice but I find this is harder when a device still works as intended and even might have a use yet. When you realise that computer motherboards still come with PS/2, floppy and IDE ports, things get trickier. My Gigabyte Z87-HD3 mainboard just has one PS/2 when predecessors would have had two and the same applies to IDE sockets and there still is a floppy drive socket on there too, a surprising sight for anyone used to thinking that such things are utterly outmoded these days. So, PC technology isn’t relinquishing backwards compatibility just yet since that mainboard is part of a system with an Intel Core i5-4670K CPU and 24 GB of RAM on there.

Even with the presence of an IDE port, I was not tempted to use leftover 10 GB and 20 GB hard drives that I have had for just over a decade. Ten years ago, that sort of capacity would have been respectable were it not for our voracious appetite for data storage thanks to photography, video and music. Apart from the size constraints, the speed of those drives cannot compare well with what we have today either and I quickly saw that when I replaced a Samsung 160 HD of a similar age with a Samsung SSD.

The result of this line of thought was that I was minded to recycle the drives so I started to think about wiping and Linux has a good tool for this in the form of the dd command. It can overwrite data on the disks so as to render the information virtually irretrievable. Also, Linux has a number of dummy devices that can supply junk data for overwriting purposes. They are like /dev/null, which is used to discard the output of a command. The first is /dev/zero, which supplies an endless stream of zero bytes, and I have used this. However, there also are /dev/random and /dev/urandom for those wanting a more random element to the overwriting.

To overwrite data on a disk with zeroes while having feedback on progress, the following command achieves the required result:

sudo dd if=/dev/zero | pv | sudo dd of=/dev/sdd bs=16M

The whole operation needs to be executed with root privileges. The if parameter of the first dd command specifies the input data, which is piped to a pv command that shows the progress information that dd would not produce by itself. From there, the data goes on to another dd command with the disk to be overwritten specified using the of parameter, and the bs parameter in that second dd command specifies the block size for the disk writing job. Unfortunately, pv is not installed by default so you need to add it yourself. On a Debian, Ubuntu or Linux Mint system, the command is the following:

sudo apt-get install pv

That pv sandwich also is invaluable for those times when dd is needed to copy partitions between different physical or virtual (in a virtual machine) disks. Without it, you might wonder what exactly is happening in the silence and that especially is concerning when you are retrying an operation that failed previously and it takes a while to complete each time.
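For anyone wanting to try the pipeline without risking a real disk, the same idea can be pointed at a scratch file. The sketch below is my own illustration: zero_fill is a hypothetical helper name, the block count is there so that the job stops by itself, and it falls back to plain dd if pv is not installed.

```shell
# Zero-fill a scratch file instead of a real disk so the dd pipeline can be
# tried safely. zero_fill is a hypothetical helper name; the first argument is
# the target file and the second is the size in MiB.
zero_fill() {
    if command -v pv >/dev/null 2>&1; then
        # Same "pv sandwich" as above, with a block count so that it stops.
        dd if=/dev/zero bs=1M count="$2" 2>/dev/null | pv | dd of="$1" bs=1M 2>/dev/null
    else
        # Fall back to plain dd when pv is not installed.
        dd if=/dev/zero of="$1" bs=1M count="$2" 2>/dev/null
    fi
}

scratch=$(mktemp)
zero_fill "$scratch" 4
wc -c < "$scratch"   # size of the scratch file in bytes
rm -f "$scratch"
```

Swapping the scratch file for a device name turns this back into a destructive operation, so it is worth checking the target twice before doing that.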

On Upgrading to Linux Mint 11

31st May 2011

For a Linux distribution that focuses on user-friendliness, it does surprise me that Linux Mint offers no seamless upgrade path. In fact, the underlying philosophy is that upgrading an operating system is a risky business. However, I have been doing in-situ upgrades with both Ubuntu and Fedora for a few years without any real calamities. A mishap with a hard drive that resulted in lost data in the days when I mainly was a Windows user places this into sharp relief. These days, I am far more careful but thought nothing of sticking a Fedora DVD into a drive to move my Fedora machine from 14 to 15 recently. Apart from a few rough edges and the need to get used to GNOME 3 together with making it a better fit for me, there was no problem to report. The same sort of outcome used to apply to those online Ubuntu upgrades that I was accustomed to doing.

The recommended approach for Linux Mint is to back up your package lists and your data before the upgrade. Doing the former is a boon because it automates adding the extras that a standard CD or DVD installation doesn’t do. While I did do a little backing up of data, it wasn’t total because I know how to identify my drives and take my time over things. Apache settings and the contents of MySQL databases were my main concern because of where these are stored.

When I was ready to do so, I popped a DVD in the drive and carried out a fresh installation into the partition where my operating system files are kept. Being a Live DVD, I was able to set up any drive and partition mappings with reference to Mint’s Disk Utility. What didn’t go so well was the GRUB installation, and it was due to the choice that I made on one of the installation screens. Despite doing an installation of version 10 just over a month ago, I had overlooked an intricacy of the task and placed GRUB on the operating system files partition rather than at the top level for the disk where it is located. Instead of trying to address this manually, I took the easier and more time-consuming step of repeating the installation like I did the last time. If there was a graphical tool for addressing GRUB problems, I might have gone for that instead, but am left wondering at why there isn’t one included at all. Maybe it’s something that the people behind GRUB should consider creating unless there is one out there already about which I know nothing.

With the booting problem sorted, I tried logging in only to find a problem with my desktop that made the system next to unusable. It was back to the DVD and I moved many of the configuration files and folders (the ones with names beginning with a “.”) from my home directory in the belief that there might have been an incompatibility. That action gained me a fully usable desktop environment but I now think that the cause of my problem may have been different to what I initially suspected. Later I discovered that ownership of files in my home area elsewhere wasn’t associated with my user ID though there was no change to it during the installation. As it happened, a few minutes with the chown command were enough to sort out the permissions issue.

The restoration of the extra software that I had added beyond what standardly gets installed took its share of time but the use of a previously prepared list made things so much easier. That didn’t work smoothly because some packages couldn’t be found the first time around, so another attempt was needed. Nevertheless, that is nothing compared to the effort needed to do the same thing by issuing one installation command at a time. Once the usual distribution software updates were in place, all that was left was to update VirtualBox to the latest version, install a Citrix client and add a PHP plugin to NetBeans. Then, next to everything was in place for me.

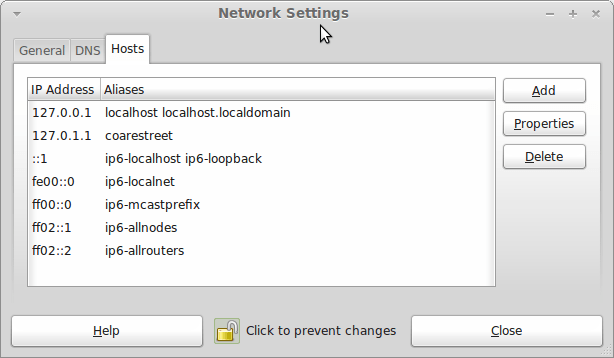

Next, Apache settings were restored as were the databases that I used for offline web development. That nearly was all that was needed to get offline websites working but for the need to add an alias for localhost.localdomain. That required installation of the Network Settings tool so that I could add the alias in its Hosts tab. With that out of the way, the system had been settled in and was ready for real work.

In light of some of the glitches that I saw, I can understand the level of caution regarding a more automated upgrade process on the part of the Linux Mint team. Even so, I still wonder if the more manual alternative that they have pursued brings its own problems in the form of those that I met. The fact that the whole process took a few hours in comparison to the single hour taken by the in-situ upgrades that I mentioned earlier is another consideration that makes you wonder if it is all worth it every six months or so. Saying that, there is something to letting a user decide when to upgrade rather than luring one along to a new version, a point that is more than pertinent in light of the recent changes made to Ubuntu and Fedora. Whichever approach you care to choose, there are arguments in favour as well as counterarguments too.

Restoring the MBR for Windows 7

25th November 2010

During my explorations of dual-booting of Windows 7 and Ubuntu 10.10, I ended up restoring the master boot record (MBR) so that Windows 7 could load again, or at least to find out whether it would start for me. The first hint that came to me when I went searching was the bootsect command but this only updates the master boot code on the partition so it did nothing for me. What got things going again was the bootrec command.

To use either of these, I needed to boot from a Windows 7 installation DVD. With my Toshiba Equium laptop, I needed to hold down the F12 key until I was presented with a menu that allowed me to choose from what drive I wanted to boot the machine, the DVD drive in this case. Then, the disk started and gave me a screen where I selected my location and moved to the next one where I selected the Repair option. After that, I got a screen where my Windows 7 installation was located. Once that was selected, I moved on to another screen from which I started a command line session. Then, I could issue the commands that I needed.

bootsect /nt60 C:

This would repair the boot sector on the C: drive in a way that is compatible with BOOTMGR. This wasn’t enough for me but was something worth trying anyway in case there was some corruption.

bootrec /fixmbr

bootrec /fixboot

The first of these restores the MBR and the second sorts out the boot sector on the system drive (where the Windows directory resides on your system). In the event, I ran both of these and Windows restarted again, proving that it had come through disk partition changes without a glitch, though CHKDSK did run in the process but that’s understandable. There’s another option for those wanting to get back a boot menu and here it is:

bootrec /rebuildbcd

Though I didn’t need to do so, I ran that too but later used EasyBCD to remove the boot menu from the start-up process because it was surplus to my requirements. That’s a graphical tool that has gained something of a reputation since Microsoft dispensed with the boot.ini file that came with Windows XP for later versions of the operating system.

Manually adding an entry for Windows 7 to an Ubuntu GRUB2 menu

21st November 2010

A recent endeavour of mine has been to set up a dual-booting arrangement on my Toshiba Equium laptop with Ubuntu 10.10 and Windows 7 side by side on there. However, unlike the same attempt with my Asus Eee PC where Windows XP coexists with Ubuntu, there was no entry for Windows 7 on the GRUB menu afterwards. (I understand that Ubuntu has had version 2 of GRUB since 9.04 though the internal version is of the form 1.9x; you can issue grub-install -v at the command line to find out what version you have on your system.) Thankfully, I eventually figured out how to add one and the process is shared here in a more coherent order than the one in which I discovered all the steps.

The first step is to edit /etc/grub.d/40_custom (using sudo) and add the following lines to the bottom of the file:

menuentry 'Windows 7' {

set root='(hd0,msdos2)'

chainloader +1

}

Since the location of the Windows installation can differ widely, I need to explain the “set root” line because (hd0,msdos2) refers to /dev/sda2 on my machine. More generally, hd0 (or /dev/sda elsewhere) refers to the first hard disk installed in any PC with hd1 (or /dev/sdb elsewhere) being the second and so on. While I was expecting to see entries like (hd0,6) in /boot/grub/grub.cfg, what I saw were ones like (hd0,msdos6) instead with the number in the text after the comma being the partition identifier; 1 is the first (sda1), 2 (sda2) is the second and so on. The next line (starting with chainloader) tells GRUB to load the first sector of the Windows drive so that it can boot. After all that decoding, my final remark on what’s above is a simple one: the text “Windows 7” is what will appear in the GRUB menu so you can change this as you see fit.
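The mapping between the two naming schemes can be written down as a few lines of shell. Treat this as a sketch under stated assumptions rather than anything definitive: grub_device is a hypothetical helper name, it assumes an msdos partition table on a /dev/sdXN device, and it takes it as given that GRUB numbers the disks in the same order as the sdX letters, which may not hold on every machine.

```shell
# Translate a Linux device name such as /dev/sda2 into the GRUB 2 form used
# above, e.g. (hd0,msdos2). grub_device is a hypothetical helper name, and the
# msdos prefix assumes an MBR (msdos) partition table on a /dev/sdXN device.
grub_device() {
    name=${1#/dev/sd}            # "a2" from "/dev/sda2"
    letter=${name%%[0-9]*}       # "a"
    part=${name#"$letter"}       # "2"
    # printf with a leading quote yields the character code; 'a' is 97.
    disk=$(( $(printf '%d' "'$letter") - 97 ))
    echo "(hd${disk},msdos${part})"
}

grub_device /dev/sda2   # prints (hd0,msdos2)
```

It still is wise to compare the result with the entries already present in /boot/grub/grub.cfg before relying on it.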

After saving 40_custom, the next step is to issue the following command to update grub.cfg:

sudo update-grub2

Once that has done its business, then you can look into /boot/grub/grub.cfg to check that the text added into 40_custom has found its way in there. That is important because this is the file read by GRUB2 when it builds the menu that appears at start-up time. A system reboot will prove conclusively that the new entry has been added successfully. Then, there’s the matter of selecting it to see if Windows loads properly like it did for me, once I chose the correct disk partition for the menu entry, that is!

A look at Slackware 13.0

5th June 2010

Some curiosity has come upon me and I have been giving a few Linux distros a spin in VirtualBox virtual machines. One was Slackware and I recall a fellow university student using it in the mid/late 1990s. Since then, my exploration took me into Redhat, SuSE, Mandrake and eventually to Ubuntu, Debian and Fedora. All of that bypassed Slackware so it was time to give the thing a look.

While the current version is 13.1, it was 13.0 that I had to hand so I had a go with that. In many ways, the installation was a flashback to the 1990s and I can see it looking intimidating to many computer users with its now old-fashioned installation GUI. If you can see through that though, the reality is that it isn’t too hard to install.

After all, the DVD was bootable. However, it did leave you at a command prompt and I can see that throwing many. The next step is to use cfdisk to create partitions (at least two are needed, swap and normal). Once that is done, it is time to issue the command setup and things look more graphical again. I picked the item for setting the locale of the keyboard and everything followed from there but there is a help option too for those who need it. If you have installed Linux before, you’ll recognise a lot of what you see. It’ll finish off the set up of disk partitions for you and supports ext4 too; it’s best not to let antique impressions fool you. For most of the time, I stuck with defaults and left it to perform a full installation with KDE as the desktop environment. If there is any real criticism, it is the absence of an overall progress bar to see where it is with package installation.

Once the installation was complete, it was time to restart the virtual machine and I found myself left at the command prompt. Only the root user was set up during installation so I needed to add a normal user too. Issuing startx was enough to get me into KDE (along with included alternatives like Xfce, there is a community build using GNOME too) for that but I wanted to have that loading automatically. To fix that, you need to edit /etc/inittab to change the default run level from 3 to 4 (hint: look for a line with id:3:initdefault: in it near the top of the file and change that; the file is well commented so you can find your way around it easily without having to look for specific esoteric text strings).
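For those who would rather not open an editor, the run level change can be expressed as a one-line sed substitution. This is only a sketch: set_runlevel_4 is a hypothetical helper name, and it writes the changed text to standard output so that the original file is left alone until you decide otherwise.

```shell
# Print the contents of an inittab-style file with the default run level
# switched from 3 to 4. set_runlevel_4 is a hypothetical helper name.
set_runlevel_4() {
    sed 's/^id:3:initdefault:/id:4:initdefault:/' "$1"
}

# Suggested use as root, keeping a copy of the original first:
#   cp /etc/inittab /etc/inittab.bak
#   set_runlevel_4 /etc/inittab.bak > /etc/inittab
```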

After all this, I ended up with a usable Slackware 13.0 installation. Login screens have a pleasing dark theme by default while the desktop is very blue. There may be no OpenOffice but KOffice is there in its place and Seamonkey is an unusual inclusion along with Firefox. It looks as if it’ll take a little more time to get to know Slackware but it looks good so far; I may even go about getting 13.1 to see how things might have changed and report my impressions accordingly. Some will complain about the rough edges that I describe here but comments about using Slackware to learn about Linux persist. Maybe, Linux distributions are like camera film; some are right for you and some aren’t. Personally, I wouldn’t thrust Slackware upon a new Linux user if they have to install it themselves but it’s not at all bad for that.

Rough?

11th November 2009

Was it because Canonical and friends kept Ubuntu in such a decent state from 8.04 through to 9.04 that things went a little quiet in the blogosphere on the subject of the well-known Linux distribution? If so, 9.10 might be proving more of a talking point and you have to wonder if this is such a good thing with the appearance of Windows 7 on the scene. Looking on the bright side, 10.04 will be an LTS release so there is some chance that any rough edges that are on display now could be resolved by next April. Even so, it might have been better not to see anything so obvious at all.

In truth, Ubuntu always has had its gaps and I have seen a few of their ilk over the last two years. Of these, a few have triggered postings on here. In fact, issues with accessing the BBC iPlayer still bring a goodly number of folk to this website. That may just be a matter of grabbing RealPlayer, now helpfully available as a DEB package, from the requisite place on the web and ensuring that Ubuntu-Restricted-Extras is in place too but you have to know that in the first place. Even so, there are unexpected behaviours like Palimpsest seeing every partition on a disk as a different drive and SIL RAID mappings being seen for hard drives that used to live on the main home PC that bit the dust earlier this year; it only happens on one of the machines that I have running Ubuntu so it may be a hardware thing, and the newly added hard drive uses none of the SIL mapping either. Perhaps more seriously (is it something that a new user should be encountering?), a misfiring variant of Brasero had me moving to K3b. Then UFRaw was sluggish in batch but that’s nothing that having a Debian VM won’t overcome. Rough edges like these do get you asking if 9.10 was ready for the big time while making you reluctant to recommend it to mainstream users like my brother.

The counterpoint to the above is that 9.10 includes a host of under the bonnet changes like the introduction of Ext4 hard drive formatting, Xsplash to allow the faster system loading to occur unseen and GNOME 2.28. To someone looking in from outside like me, that looks like a lot of work and might explain the ingress of the annoyances that I have seen. Add to that the fact that we are between Debian releases so things like the optimised packaging of ImageMagick or UFRaw may not be so high up the list of the things to do, especially with the more general speed optimisations that were put in place for 9.10. With 10.04 set to be an LTS release, I’d be hoping that consolidation is the order of the day over the next five or six months but it seems to be the inclusion of new features and other such progress that get magazine reviewers giving higher ratings (Linux Format has given it a mark of 9 out of 10). With the mooted inclusion of GNOME 3 and its dramatically different interface in 10.10, they should get their fill of that. However, I’d like to see some restraint for the sake of a smooth transition from the familiar GNOME 2.x to the new. If GNOME 3 stays very like its alpha builds, then the question as to how users will take to it arises. Of course, there’s some time yet before we see GNOME 3 and, having seen how the Ubuntu developers transformed GNOME 2.28, I wouldn’t be surprised if the impact of any change could be dulled.

In summary, my few weeks with Ubuntu 9.10 as my main OS have thrown up no major roadblocks that would cause me to look at moving elsewhere; Fedora would be tempting if that situation were to arise. The irritations that I have seen are more like signs of a lack of polish and remain peripheral to day-to-day working if you discount CD/DVD burning. To be honest, there always have been roughnesses in Ubuntu but has the lack of sizeable change spoilt us? Whatever about how things feel afterwards, big changes can mean new problems to resolve and inspire blog posts describing any solutions so it’s not all bad. If that’s what Canonical wants to see, they might get it and the year ahead looks as if it is going to be an interesting one after a recent quieter period.

Adding a new hard drive to Ubuntu

19th January 2009

This is a subject that I thought that I had discussed on this blog before but I can’t seem to find any reference to it now. I have discussed the subject of adding hard drives to Windows machines a while back so that might explain why I was under the impression that I had. Of course, there’s always the possibility that I can’t find things on my own blog but I’ll go through the process.

What has brought all of this about was the rate at which digital images were filling my hard disks. Even with some housekeeping, I could only foresee the collection growing so I went and ordered a 1TB Western Digital Caviar Green Power from Misco. City Link did the honours with the delivery and I can credit their customer service with regard to organising delivery without my needing to get to the depot to collect the thing; it was a refreshing experience that left me pleasantly surprised.

For most of the time, hard drives that I have had generally got on with the job but there was one experience that has left me wary. Assured by good reviews, I went and got myself an IBM DeskStar and its reliability didn’t fill me with confidence and I will not touch their Hitachi equivalents because of it (IBM sold their hard drive business to Hitachi). This was a period in time when I had hardware faltering on me with an Asus motherboard putting me off that brand around the same time as well (I now blame it for going through a succession of AMD Athlon CPUs). The result is that I have a tendency to go for brands that I can trust from personal experience and Western Digital falls into this category (as does Gigabyte for motherboards), hence my going for a WD this time around. That’s not to say that other hard drive makers wouldn’t satisfy my needs since I have had no problems with disks from Maxtor or Samsung but I’ll stick with those makers that I know until they let me down, something that I hope never happens.

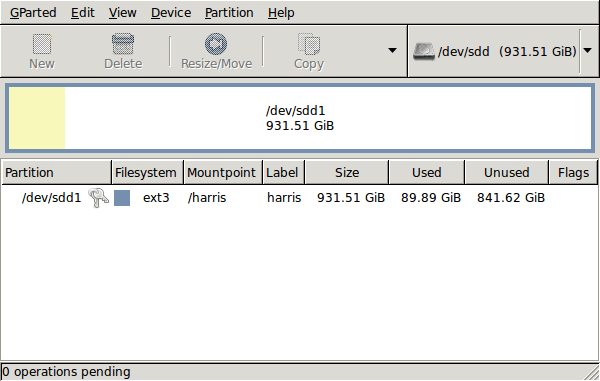

GParted running on Ubuntu

Anyway, let’s get back to installing the hard drive. The physical side of the business was the usual shuffle within the PC to add the SATA drive before starting up Ubuntu. From there, it was a matter of firing up GParted (System -> Administration -> Partition Editor on the menus if you already have it installed). The next step was to find the new empty drive and create a partition table on it. At this point, I selected msdos from the menu before proceeding to set up a single ext3 partition on the drive. You need to select Edit -> Apply All Operations from the menus to set things into motion before sitting back and waiting for GParted to do its thing.

After the GParted activities, the next task is to set up automounting for the drive so that it is available every time that Ubuntu starts up. The first thing to be done is to create the folder that will be the mount point for your new drive, /newdrive in this example. The next involves editing /etc/fstab with superuser access to add a line like the following with the correct UUID for your situation:

UUID=32cf775f-9d3d-4c66-b943-bad96049da53 /newdrive ext3 defaults,noatime,errors=remount-ro

You can also add a comment like “# /dev/sdd1” above that so that you know what’s what in the future. To get the actual UUID that you need to add to fstab, issue a command like one of those below, changing /dev/sdd1 to what is right for you:

sudo vol_id /dev/sdd1 | grep "UUID=" # Older Ubuntu versions

sudo blkid /dev/sdd1 | grep "UUID=" # Newer Ubuntu versions

This is the sort of thing that you get back and the part beyond the “=” is what you need:

ID_FS_UUID=32cf775f-9d3d-4c66-b943-bad96049da53
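With blkid, the UUID comes back wrapped in double quotes within a longer line, so a little text processing helps when assembling the fstab entry. The following is a sketch only: uuid_from_blkid and fstab_line are hypothetical helper names, the sample line imitates the usual shape of blkid output rather than being copied from my system, and the mount options simply repeat those used earlier.

```shell
# Pull the UUID value out of one line of blkid output read from stdin.
# uuid_from_blkid and fstab_line are hypothetical helper names.
uuid_from_blkid() {
    sed -n 's/.*[[:space:]]UUID="\([^"]*\)".*/\1/p'
}

# Build an fstab entry like the one shown earlier from a UUID and mount point.
fstab_line() {
    echo "UUID=$1 $2 ext3 defaults,noatime,errors=remount-ro"
}

# Sample blkid-style output, reusing the UUID from this post.
sample='/dev/sdd1: UUID="32cf775f-9d3d-4c66-b943-bad96049da53" TYPE="ext3"'
uuid=$(printf '%s\n' "$sample" | uuid_from_blkid)
fstab_line "$uuid" /newdrive
```

The output can be checked by eye and then pasted into /etc/fstab, which feels safer than having a script edit that file directly.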

Once all of this has been done, a reboot is in order and you then need to set up folder permissions as required before you can use the drive. This part gets me firing up Nautilus using gksu and adding myself to the user group in the Permissions tab of the Properties dialogue for the mount point (/newdrive, for example). After that, I issued something akin to the following command to set global permissions:

chmod 775 /newdrive

With that, I had completed what I needed to do to get the WD drive going under Ubuntu. After that IBM DeskStar experience, the new drive remains on probation but moving some non-essential things on there has allowed me to free some space elsewhere and carry out a reorganisation. Further consolidation will follow but I hope that the new 931.51 GiB (binary gigabytes or 1024*1024*1024 bytes rather than the decimal gigabytes (1,000,000,000 bytes) preferred by hard disk manufacturers) will keep me going for a good while before I need to add extra space again.
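The difference between the two kinds of gigabyte is easy to check with a little arithmetic. In this sketch, bytes_to_gib is a hypothetical helper name and awk does the division because shell arithmetic only handles integers; a nominal 1 TB is taken to be exactly 10^12 bytes, which is an assumption about the marketing figure and not a measurement of my drive.

```shell
# Convert a byte count to binary gigabytes (GiB) with two decimal places.
# bytes_to_gib is a hypothetical helper name; awk handles the division
# because shell arithmetic is integer-only.
bytes_to_gib() {
    LC_ALL=C awk -v b="$1" 'BEGIN { printf "%.2f\n", b / (1024 * 1024 * 1024) }'
}

# A nominal 1 TB (10^12 bytes) expressed in GiB.
bytes_to_gib 1000000000000   # prints 931.32
```

That the reported figure is 931.51 GiB suggests that the drive holds slightly more than the nominal 10^12 bytes.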